Just as regular expressions and finite automata happened to have a correspondence, so do context-free grammars and pushdown automata. As with the proofs of Kleene's theorem, we will take advantage of the fact that PDAs that accept via final state are equivalent to those that accept by empty stack. We will show that CFGs can be converted to PDAs that accept via empty stack and vice-versa. Since we already showed that these are equivalent with PDAs that accept via final state, we effectively show that these are equivalent to CFGs too.

Theorem 6.13. Let $G$ be a context-free grammar. Then there exists a pushdown automaton $M$ such that $N(M) = L(G)$.

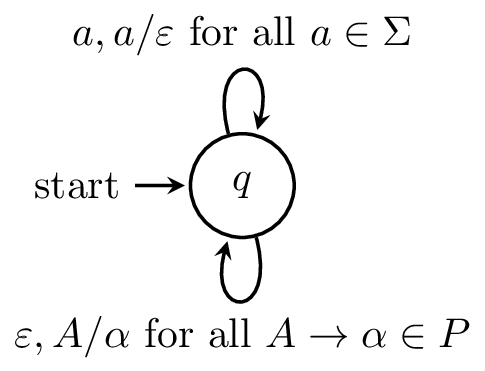

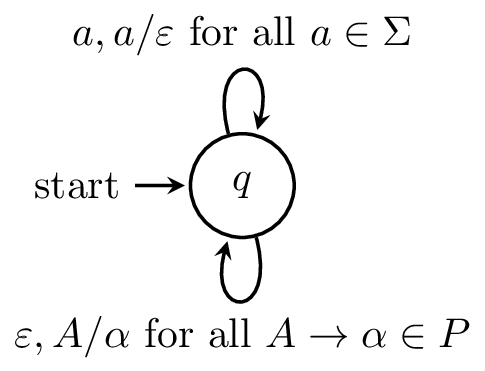

Proof. Let $G = (V,\Sigma,P,S)$ be a CFG. We will create a PDA $M = (Q,\Sigma,\Gamma, \delta, q_0, Z_0)$ which accepts by empty stack defined as follows:

Here is what the machine looks like.

Now, we will show that $L(G) \subseteq N(M)$. Let $w \in L(G)$. There exists a leftmost derivation for $w$ $$S = \gamma_1 \Rightarrow \gamma_2 \Rightarrow \cdots \Rightarrow \gamma_n = w,$$ where $\gamma_i \in (V \cup \Sigma)^*$ for $1 \leq i \leq n$. Since this is a leftmost derivation, we can write $\gamma_i = x_i \alpha_i$, where $x_i \in \Sigma^*$ and $\alpha_i \in V(V \cup \Sigma)^*$ for $1 \leq i \leq n-1$. In other words, $\alpha_i$ begins with the leftmost variable of $\gamma_i$. Furthermore, $x_i$ must be a prefix of $w$ and for each $x_i$, we can write $w = x_i y_i$ for some $y_i \in \Sigma^*$.

We will show by induction on $i$, that $(q,w,S) \vdash^* (q,y_i,\alpha_i)$ on the PDA $M$ for all $1 \leq i \leq n$.

For our base case, we take $i = 1$. We have $x_1 = \varepsilon$, $y_1 = w$, and $\alpha_1 = S$. Thus, we have $(q,w,S) \vdash^* (q,y_i,\alpha_i)$ trivially.

For our inductive hypothesis, we assume that $(q,w,S) \vdash^* (q,y_j,\alpha_j)$ for all $j < i$. We can apply this to get $(q,w,S) \vdash^* (q,y_{i-1},\alpha_{i-1})$, where $x_{i-1} y_{i-1}$, $\alpha_{i-1} = A_{i-1} \eta_{i-1}$ for some $\eta_{i-1} \in (V \cup \Sigma)^*$, and $\gamma_{i-1} = x_{i-1} \alpha_{i-1}$.

Since we are considering the leftmost derivation, there must exist a production $A_{i-1} \to \beta_{i-1}$ in $G$ for some $\beta_{i-1} \in (V \cup \Sigma)^*$. By our definition of $M$, there is a transition with $(q,\beta_{i-1}) \in \delta(q,\varepsilon,A_{i-1})$. Therefore, we have $(q,y_{i-1},\alpha_{i-1}) \vdash (q,y_{i-1},\beta_{i-1}\eta_{i-1})$.

Now, write $y_{i-1} = y_{i-1}' y_i$ and $\beta_{i-1} \eta_{i-1} = y_{i-1}' \alpha_i$, where $y_{i-1}' \in \Sigma^*$. We can read $y_{i-1}'$ via transitions $\delta(q,a,a) = \{(q,\varepsilon)\}$ for all $a \in \Sigma$ to give us $(q,w,S) \vdash^* (q,y_i,\alpha_i)$. Thus, we have shown that for each $i$, we have $(q,w,S) \vdash^* (q,y_i,\alpha_i)$. Then taking $i = n$, we have $$(q,w,S) \vdash^* (q,y_n,\alpha_n) = (q,\varepsilon,\varepsilon)$$ and thus $w \in N(M)$ as desired.

Next, we will show that $N(M) \subseteq L(G)$. Let $w \in N(M)$. We will show that for any variable $A \in V$, if $(q,w,A) \vdash^* (q,\varepsilon,\varepsilon)$, then $A \Rightarrow^* w$. We will show this by induction on $n$, then length of an accepting computation $(q,w,A) \vdash^* (q,\varepsilon,\varepsilon)$.

For our base case, we have $n = 1$, since $A \neq \varepsilon$. Since $A$ is at the top of the stack, we must choose a transition of the form $\delta(q,\varepsilon,A)$. However, this means that $w = \varepsilon$ and $(q,\varepsilon) \in \delta(q,\varepsilon,A)$ and this implies that there is a rule $A \to \varepsilon$ in $G$. Thus, we have $A \Rightarrow^* w$.

Now, suppose that $n > 1$ and for our inductive hypothesis, we assume that for all variables $A \in V$, if the length of a computation $(q,w,A) \vdash^* (q,\varepsilon,\varepsilon)$ of $M$ is fewer than $n$ steps, then $A \Rightarrow^* w$. Consider a computation $(q,w,A) \vdash^* (q,\varepsilon,\varepsilon)$ of length $n$.

Again, we must first take a transition $\delta(q,\varepsilon,A)$ since $A$ is on the top of the stack. This time, we take the transition corresponding to a rule of the form $A \to \alpha_1 \cdots \alpha_k$, where $\alpha_1, \dots, \alpha_k \in (V \cup \Sigma)$, and we have $(q,w,A) \vdash (q,w,\alpha_1 \cdots \alpha_k)$. From here, consider the following computation, $$(q,w,A) \vdash (q,w,\alpha_1 \cdots \alpha_k) \vdash^* (q,w',\alpha_2 \cdots \alpha_k)$$ where $w'$ is a suffix of $w$ with $w = w_1 w'$.

In other words, we have $(q,w_1 w',\alpha_1 \cdots \alpha_k) \vdash^* (q,w',\alpha_2 \cdots \alpha_k)$. Now, we make use of the following observation: the computation $$(q,w_1,\alpha_1 \cdots \alpha_k) \vdash^* (q,\varepsilon,\alpha_2 \cdots \alpha_k)$$ is also a valid computation in $M$. The reasoning is simple: the computation clearly does not depend on $w'$, since none of $w'$ was read. If our input word were simply $w_1$, we would have exactly the above computation. However, this leads us to another similarly useful observation we can make: the computation $$(q,w_1,\alpha_1) \vdash^* (q,\varepsilon,\varepsilon)$$ is also a valid computation in $M$. The reasoning is similar: if we began our computation of $w_1$ with only $\alpha_1$ on the stack, we would arrive at the same configuration since $\alpha_2 \cdots \alpha_k$ were inconsequential to the computation.

Now, we observe that we have one of two possibilities. If $\alpha_1 \in \Sigma$, then $(q,w_1,\alpha_1) \vdash (q,\varepsilon,\varepsilon)$ implies that $w_1 = \alpha_1$ and we have $\alpha_1 \Rightarrow^* w_1$ trivially. Otherwise, we have $\alpha_1 \in V$ and since $(q,w_1,\alpha_1) \vdash (q,\varepsilon,\varepsilon)$ is a computation of length less than $n$, we have $\alpha_1 \Rightarrow^* w_1$ by the inductive hypothesis.

We can now return to the computation $$(q,w,A) \vdash (q,w,\alpha_1 \cdots \alpha_k) \vdash^* (q,w',\alpha_2 \cdots \alpha_k)$$ and observe that we have $$(q,w,A) \vdash (q,w_i w',\alpha_i \cdots \alpha_k) \vdash^* (q,w',\alpha_{i-1} \cdots \alpha_k)$$ for every $1 \leq i \leq k$ and we can apply a similar argument to obtain derivations $\alpha_i \Rightarrow^* w_i$ for $w = w_1 \cdots w_k$. This gives us our derivation, $$A \Rightarrow \alpha_1 \cdots \alpha_k \Rightarrow^* w_1 \alpha_2 \cdots \alpha_k \Rightarrow^* w_1 w_2 \alpha_3 \cdots \alpha_k \Rightarrow^* w_1 \cdots w_k = w$$ and thus $A \Rightarrow^* w$. Taking $A = S$, we have $S \Rightarrow^* w$ and $w \in L(G)$ as desired. $$\tag*{$\Box$}$$

Now, we'll consider the other direction. We won't go through all the details this time.

Theorem 6.14. Let $M$ be a pushdown automaton. Then there exists a context-free grammar $G$ such that $L(G) = N(M)$.

Proof (sketch). Let $M = (Q,\Sigma,\Gamma,\delta,q_0,Z_0)$ be a PDA that accepts by empty stack. We want to simulate the process of performing a computation on $M$ via a grammar $G$. Consider the first step of such a computation $$(q,au,X) \vdash (q',u,Y_1 \cdots Y_k)$$ where $q,q' \in Q$, $u \in \Sigma^*$, $a \in \Sigma \cup \{\varepsilon\}$, and $X, Y_1, \dots, Y_K \in \Gamma$.

In this computation, we follow the transition $(q',Y_1 \cdots Y_k) \in \delta(q,a,X)$. Note that $a \in \Sigma \cup \{\varepsilon\}$. Then the computation proceeds and at some point we end up in a configuration $(q_1,u_1,Y_2 \cdots Y_k)$. We continue the computation, removing each $Y_i$ and consuming the input word until we end up in a configuration $(r,\varepsilon,\varepsilon)$ for some state $r \in Q$.

We will construct a CFG $G = (V,\Gamma,P,S)$ that generates $N(M)$. First, we define the set of variables $V$ by $$ V = \{S\} \cup \{A_{q,X,r} \mid q,r \in Q, X \in \Gamma\}.$$ For clarity, we will write $[q,X,r]$ for $A_{q,X,r}$ in the rest of the proof.

The idea is that from $[q,X,r]$, one can construct a derivation $[q,X,r] \Rightarrow^* w$ for a word $w$ if and only if $(q,w,X) \vdash^* (r,\varepsilon,\varepsilon)$. In a sense, the variable $[q,X,r]$ represents the computation from $q$ to $r$ on input $w$ with $X$ on the stack. We then need to define our set of productions appropriately.

Consider a transition $(r,Y_1 \cdots Y_k) \in \delta(q,a,X)$ as above. If what we have is $(r,\varepsilon)$, then we add the production $$[q,X,r] \to a.$$ This represents the action of popping $X$ off the stack, reading a symbol $a$, and moving to state $r$.

If $k \geq 1$, then we add productions of the form $$[q,X,r_k] \to a[r,Y_1,r_1] [r_1,Y_2,r_2] \cdots [r_{k-1},Y_k,r_k]$$ for all possible $r_1, \dots, r_k \in Q$. This represents the action of reading a symbol $a$, popping $X$ from the stack, pushing $Y_1 \cdots Y_k$ on to the stack, and considering all possible sequences of states $r_1, \dots, r_k$ such that we can pop $Y_1 \cdots Y_k$, while moving to state $r$.

Since we want to generate all words such that the PDA $M$ reaches a configuration with an empty stack, we add transitions for all states $q \in Q$ and the start state $q_0$ $$S \to [q_0,Z_0,q].$$ From this, we have $(q_0,w,Z_0) \vdash^* (q,\varepsilon,\varepsilon)$ if and only if $S \Rightarrow [q_0,Z_0,q] \Rightarrow^* w$. $$\tag*{$\Box$}$$