When we were talking about translating English statements into propositional logic, a lot of you mentioned some formulas that were almost the same but not quite what the statement was saying. Of course, getting to this point in a computer science degree, you will have some understanding of the semantics of Boolean logic and so many of you tried applying what you knew to those translations. Here, we need to make a distinction between equality and logical equivalence.

Definition 5.1. We say two well-formed formulas $\alpha$ and $\beta$ are logically equivalent if $\alpha^t = \beta^t$ for every valuation $t$.

Note that the concept of logical equivalence is distinct from equality. When we say that two formulas are equal, we mean they are literally exactly the same. In other words, equality is a property of the formula's syntax (does it contain the same symbols in the same order?), but not its semantics (do the two formulas have the same value under the same valuation?). Often when we think we want to talk about whether two formulas are equal, we really want to know whether they mean the same thing, which is logical equivalence.

There are slides in the official course notes that have these identities laid out as a handy reference. Since HTML and MathJax don't quite lend themselves to such formatting, I'll comment a bit about each identity as well.

Definition 5.2. (Logical identities)

Here, we can finally make use of your instincts that were honed from years of algebra to apply substitutions and transformations to logical formulas. For this reason, these kinds of proofs can be called transformational proofs.

Example 5.3. We want to show that $(((\neg p) \wedge q) \vee p) \equiv (p \vee q)$. \begin{align*} (((\neg p) \wedge q) \vee p) & \equiv ((( \neg p) \vee p) \wedge (q \vee p)) & \text{(Distributivity)} \\ & \equiv (T \wedge (q \vee p)) & \text{(Excluded middle)} \\ & \equiv (q \vee p) & \text{(Simplification I)} \\ & \equiv (p \vee q) & \text{(Commutativity)} \end{align*}

It's not hard to see that $\equiv$ is an equivalence relation (in the general sense), so it is symmetric, reflexive, and transitive. This means that if one wishes to show a logical equivalence, you can start on either side.

Example 5.4. We will show $((p \wedge q) \vee (q \wedge r)) \equiv (q \wedge (p \vee r))$. \begin{align*} (q \wedge (p \vee r)) & \equiv ((q \wedge p) \vee (q \wedge r)) & \text{(Distributivity)} \\ & \equiv ((p \wedge q) \vee (q \wedge r)) & \text{(Commutativity)} \end{align*}

Because of this, keep in mind that we can also apply the rules in both directions, as this next example will show.

Example 5.5 We will show that the following version of Simplification II holds, $(p \vee (p \wedge q)) \equiv p$. \begin{align*} (p \vee (p \wedge q)) & \equiv ((p \wedge T) \vee (p \wedge q)) & \text{(Simplification I)} \\ & \equiv (p \wedge (T \vee q)) & \text{(Distributivity)} \\ & \equiv (p \wedge T) & \text{(Simplification I)} \\ & \equiv p & \text{(Simplification I)} \end{align*}

Now, how can we show that two formulas are not logically equivalent? It just takes finding a single valuation where the outcomes of the two formulas disagree.

We can apply these ideas to some simple applications that you may have already encountered.

The most common place we run into logic when coding is in conditionals. Let's consider the following code:

We can assign propositional variables for each condition we wish to test. Then by combining the propositional variables to express each conditional into propositional formulas, we can very easily see whether certain blocks are ever reached or whether two pieces of code have the same behaviour.

Example 5.6. Let's examine the following pieces of code.

| Code snippet A | Code snippet B | |

|---|---|---|

|

|

We can express the conditions under which each block gets run.

| Code snippet A | Code snippet B | |

|---|---|---|

|

|

Now, we can show that the conditionals for each block are logically equivalent.

There are other kinds of problems that can arise from this sort of setting. For instance, one can show that a certain block of code is never reachable, in which case the task would be to show that the conditional statement leading to that block is logically equivalent to $F$. Or perhaps, we can show that a block is reachable, which is the same as determining whether the conditional for that block is satisfiable.

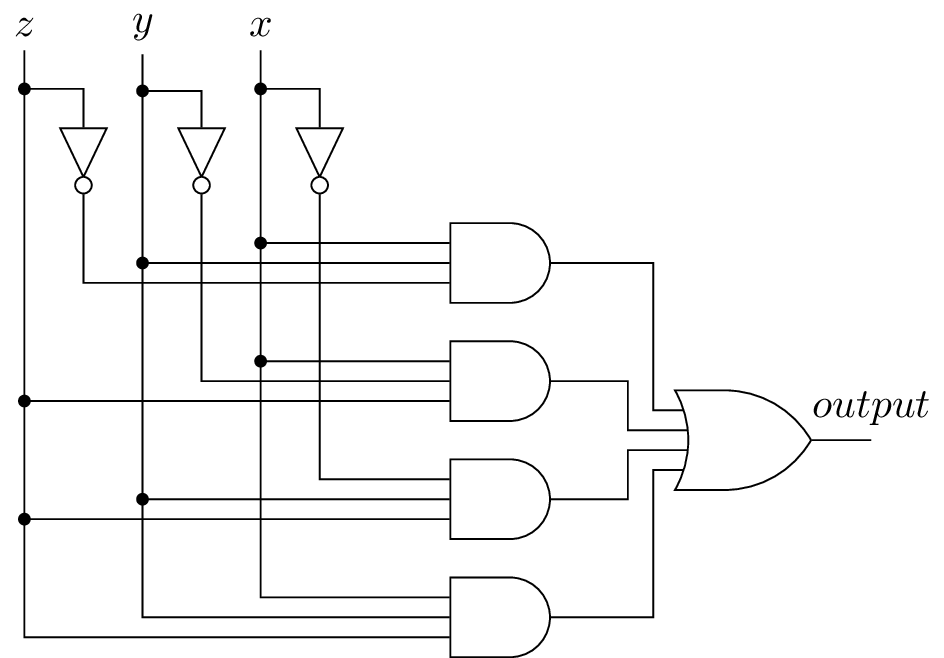

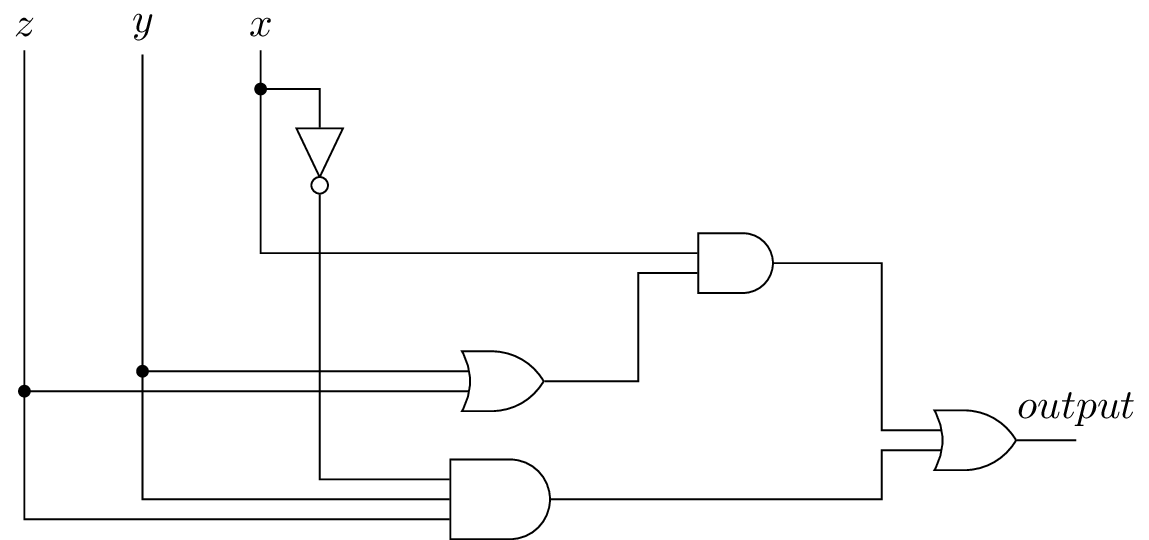

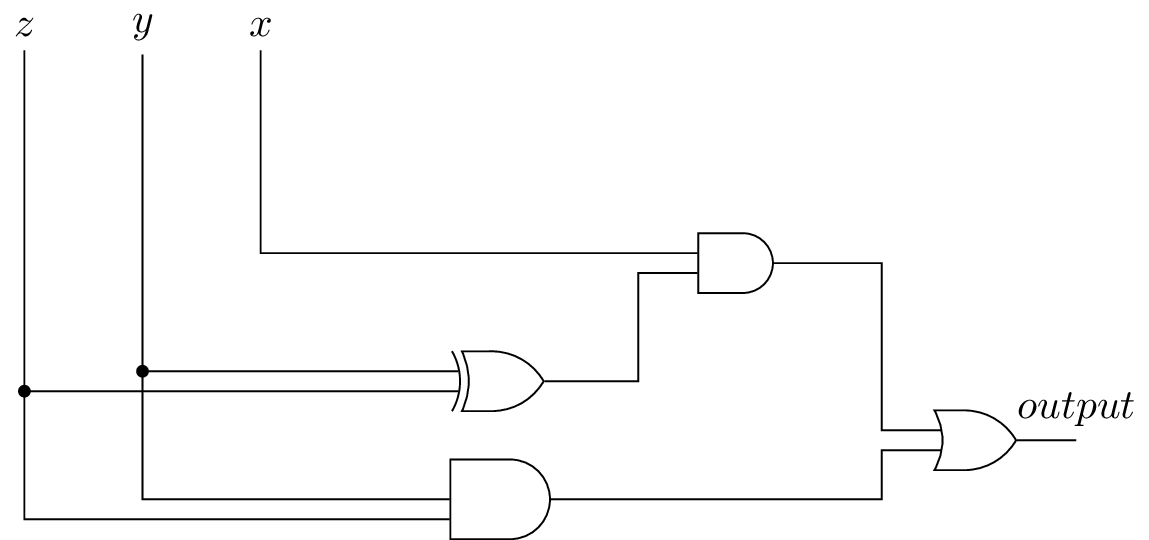

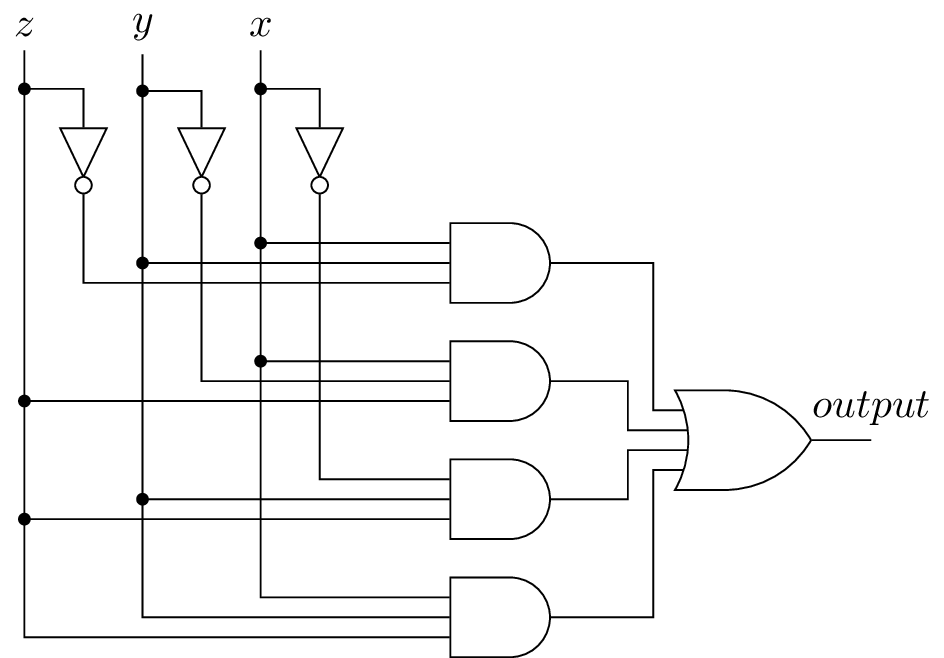

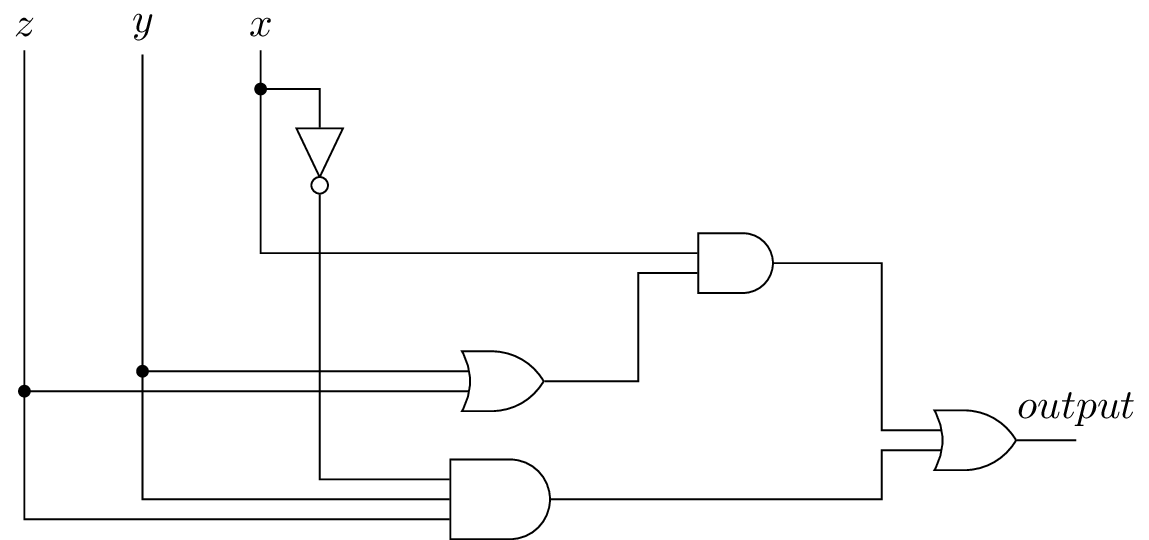

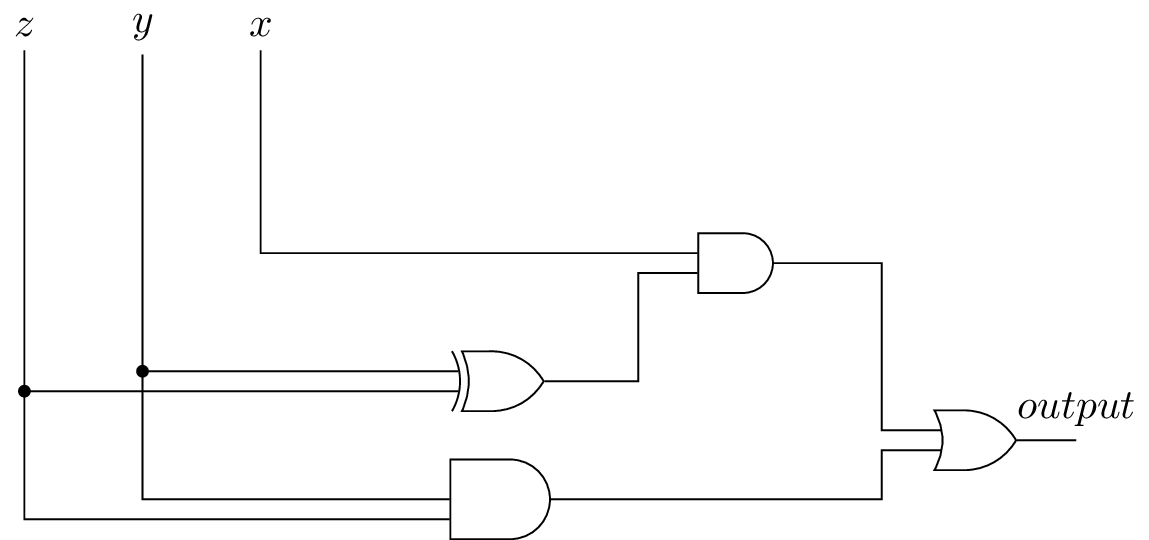

Digital circuits are made up of logic gates. You will probably have seen this in CS 251.

Example 5.7. Suppose the Feds Waterfowl Committee (which I've been told is a real committee), tasked with evaluating the design of a goose mascot for Feds, is made up of three members. For each mascot design they screen, each member votes either yes or no. A mascot design passes screening if and only if it receives two or more yes votes. More formally, this is called the majority function and we can extend it to an $n$-ary boolean function $M:\{T,F\}^n \to \{T,F\}$ by $$M(x_1,\dots,x_n) = \begin{cases} T & \text{if $\sum_{i=1}^n b(x_i) \geq \frac n 2$}, \\ F & \text{otherwise},\end{cases}$$ where $b:\{T,F\} \to \{0,1\}$ is the function $b(T) = 1$ and $b(F) = 0$. There are a few ways to define such a function. Since in our case, we're only dealing with three inputs, there is a very obvious answer taken by translating the result directly: $$((x \wedge y) \vee ((x \wedge z) \vee (y \wedge z))).$$ Does this really work? We can verify in just a moment.

A more general approach for any boolean function we may hand you is to construct an answer based on the truth table.

| $x$ | $y$ | $z$ | $M(x,y,z)$ | |

|---|---|---|---|---|

| T | T | T | T | |

| T | T | F | T | |

| T | F | T | T | |

| T | F | F | F | |

| F | T | T | T | |

| F | T | F | F | |

| F | F | T | F | |

| F | F | F | F |

Then, by examining the truth table, we can construct a formula by taking conjunctions of the rows for which $M(x,y,z)$ evaluates to $T$. As you may suspect, there is more than one way to do this:

Clearly, since these are all derived from the same truth table, they should be logically equivalent, but you can always check. And the formula from before? We can check that it's equivalent to one of these formulas too.

Gates also play an interesting role in theoretical computer science, particularly in the area of circuit complexity, where we can measure the complexity of computational problems in terms of the size of circuits. And even fancier logic gates can be found in quantum circuit complexity, where the results of gates need not be boolean values.

Related to circuit design is the question of just how many connectives we need. In the design and manufacture of integrated circuits, it's often desirable to use as few different types of connectives as possible. If we can define a large number of connectives with a few different types of connectives, then we can fit more connectives into the circuit and simply program them when we need a particular connective that's not in the basic design.

For instance, we obviously don't need $\oplus$ or $\leftrightarrow$, since we've shown that they can be expressed as a combination of other connectives. Even $\rightarrow$ is not really needed, since we've shown that $(p \rightarrow q) \equiv ((\neg p) \vee q)$. We say that $\rightarrow$ is definable in terms of $\neg$ and $\vee$. Strictly speaking, we can have as many as sixteen different binary connectives and it's clear that we don't need all of them. But how far can we take this idea?

Definition 5.8. A set $\mathcal C$ of connectives is called adequate if and only if any $n$-ary connective is definable in terms of operations in $\mathcal C$.

We can show that the set of connectives we use in this class, $\{\wedge, \vee, \neg, \rightarrow\}$ is adequate. However, from before, you may already guess that we can come up with an even smaller adequate set.

Theorem 5.9. The set $\{\wedge, \vee, \neg\}$ is an adequate set of connectives.

The proof of this is fairly straightforward if we assume that our set $\{\wedge, \vee, \neg, \rightarrow\}$ is adequate.

Things become a bit less straightforward if we don't assume this, but still easily doable. Essentially, we have the following algorithm to represent any $n$-ary function $f:\{T,F\}^n \to \{T,F\}$. Let $p_1,\dots,p_n$ denote the arguments to $f$. Consider the truth table for $f$.

This turns out to be similar to what we did when trying to construct a digital circuit.

Now, three connectives is pretty good. Can we do better?

Theorem 5.10. The sets $\{\wedge, \neg\}$, $\{\vee,\neg\}$, $\{\rightarrow,\neg\}$ are adequate sets.

There are a few ways to prove this. One can do this from first principles. However, we can also observe that by Theorem 5., since $\{\wedge,\vee,\neg\}$ is adequate, it remains to show that we can define the other connectives in terms of the ones in our set. Then by making use of De Morgan's laws and remembering that $\rightarrow$ is definiable in terms of $\neg$ and $\vee$, we can get our results.

Are there sets that aren't adequate? It seems pretty clear that, say, $\{\wedge\}$ isn't an adequate set. However, we can show that there are slightly less obvious sets.

Theorem 5.11. The set $\{\wedge, \vee\}$ is not an adequate set of connectives.

The proof of this fact comes from the observation that for any formula which uses $\wedge$ and $\vee$ as connectives, under a valuation which makes every variable true, the formula is true. This would mean that $\neg$ is not definable by $\{\wedge, \vee\}$.

Does this mean that an adequate set of size two is the best we can do? It turns out the answer is no...