Now, strictly speaking, we haven't really defined very much. What we've discussed so far are really the symbols used in the language of predicate logic and a bit about how we might interpret them for context. If were were being strict about it, we should have defined what our symbols are and how to put them together and the question of what these things actually mean gets formally defined as the semantics of our language. As you may suspect, the semantics of predicate logic are quite a bit more complicated than what we've done with propositional logic. However, since there's so many new symbols to deal with, it helps to talk about what these things are supposed to be informally, since it'll be another week or so before we start formally defining the meaning of these symbols.

So far, we have seen seven types of symbols:

Example 12.1. To reiterate that what we have defined are just symbols, we can put together an alien-looking structure consisting of

In other words, we must always keep in mind that symbols have no particular meaning until we define what they mean. This will come later and more formally when we discuss semantics.

We need to be a bit careful now that we have introduced all of this machinery. In propositional logic, the well-formedness of a formula was fairly straightforward and once we settled what a formula could be, we could rest assured that the only things we would be dealing with were ultimately formulas. Even propositional variables were formulas. With the introduction of objects and functions, we now have to consider expressions that may not necessarily have a truth value associated with it semantically. We need to distinguish these two possible types of expressions: those talking about things in our domain and those which may carry a truth value.

Recall again that so far, we have symbols and we have no rules about how to put them together. We need to think back to the days before we had well-formed formulas and contend with these strings of symbols called expressions. Now, we need a well-defined way to tell us how to put our new symbols together into strings of symbols that can potentially mean something.

Informally, an expression that corresponds to a thing in our domain is called a term.

Definition 12.2. A term is

As a side note, our definition of a function symbol requires an arity of at least 1. However, an interesting question to think about is whether it makes sense to consider functions of arity 0.

Remember that at this point, we are back to talking about syntax. So a term is a syntactic construct. Note that here, we define how the function symbol is used. The concept of a function gives meaning to the function symbol, but, as you might suspect, we will get to this when we start talking about semantics.

Just like in propositional logic, those expressions that we intend to have a truth value are called formulas.

Definition 12.3. A formula is

Again, we are careful to note that we are concerned with predicate symbols at this point.

Just like with propositional formulas, we can show that this definition gives us a well-formed formula and that we can construct a parse tree from it.

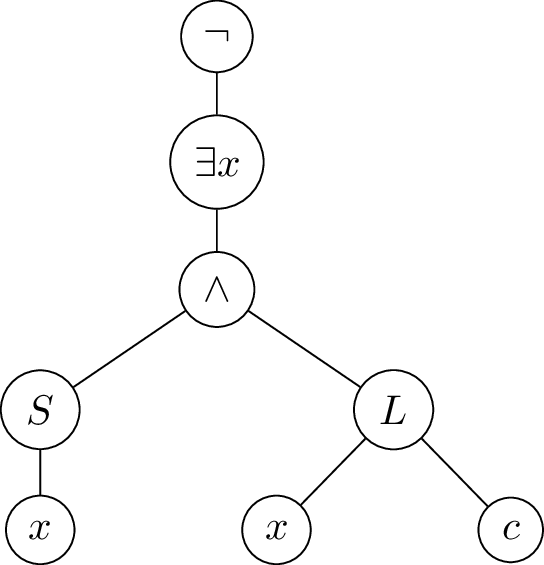

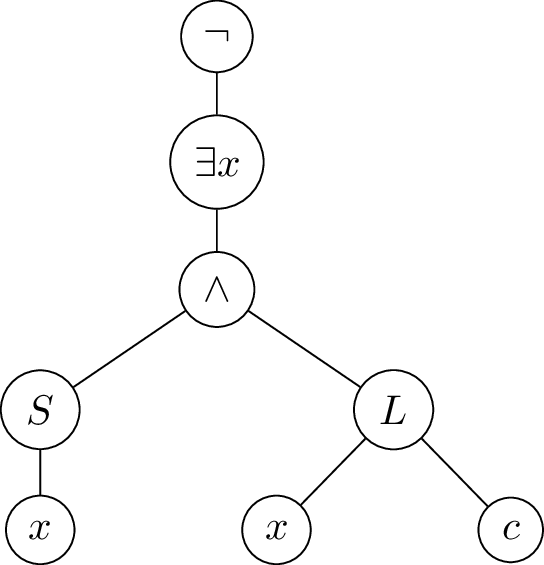

Example 12.4. Recall from last class, we considered the statement "No students love CS 245". Below is the parse tree for the formula $(\neg(\exists x(S(x) \wedge (L(x,c)))))$.

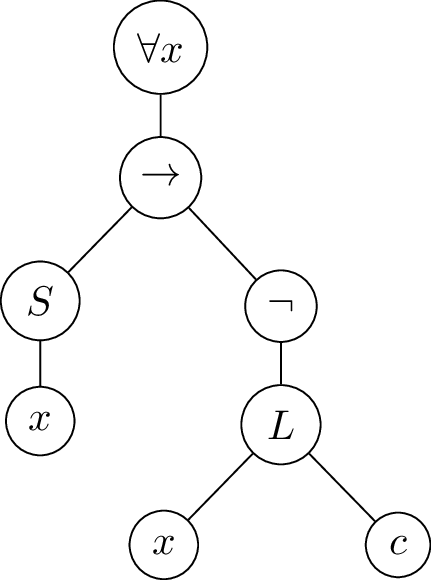

And here is the parse tree for $(\forall x (S(x) \rightarrow (\neg L(x,c))))$.

This looks much more complicated than our trees from propositional logic, since we have many more symbols to consider. Where before propositional variables made up the leaves of the tree, we now have atomic terms. Before, we only needed to consider a handful of connectives, but now we need to consider predicate and function symbols. An $n$-ary predicate or function symbol will have $n$ children. Finally, we have quantifiers, which will have a single child in the tree.

Because we have so many new things to deal with, the parse tree provides a nice visual way to capture a lot of the things that we need to consider. For instance, there's a bit more to consider when working with variables.

Recall that variables are meant to be placeholders for elements of the domain. Ideally, we would like to have a formula and plug in values from our domain and have the formula still mean the same thing. Unfortunately, things are not quite so simple. Let's consider the following example.

int f (int x) {

int z = 78;

return x + y + z;

}

As already mentioned, and as you are probably familiar with already, variables are governed by scope. In the code example we have, we see that x and z are what we consider to be bound, either by the parameter or by the declaration. But what about y?

Informally, we can consider an occurrence of a variable $x$ in a formula to be free if there is no $\forall x$ or $\exists x$ on the leaf-to-root path from that occurrence in the formula's parse tree. Consider the formula

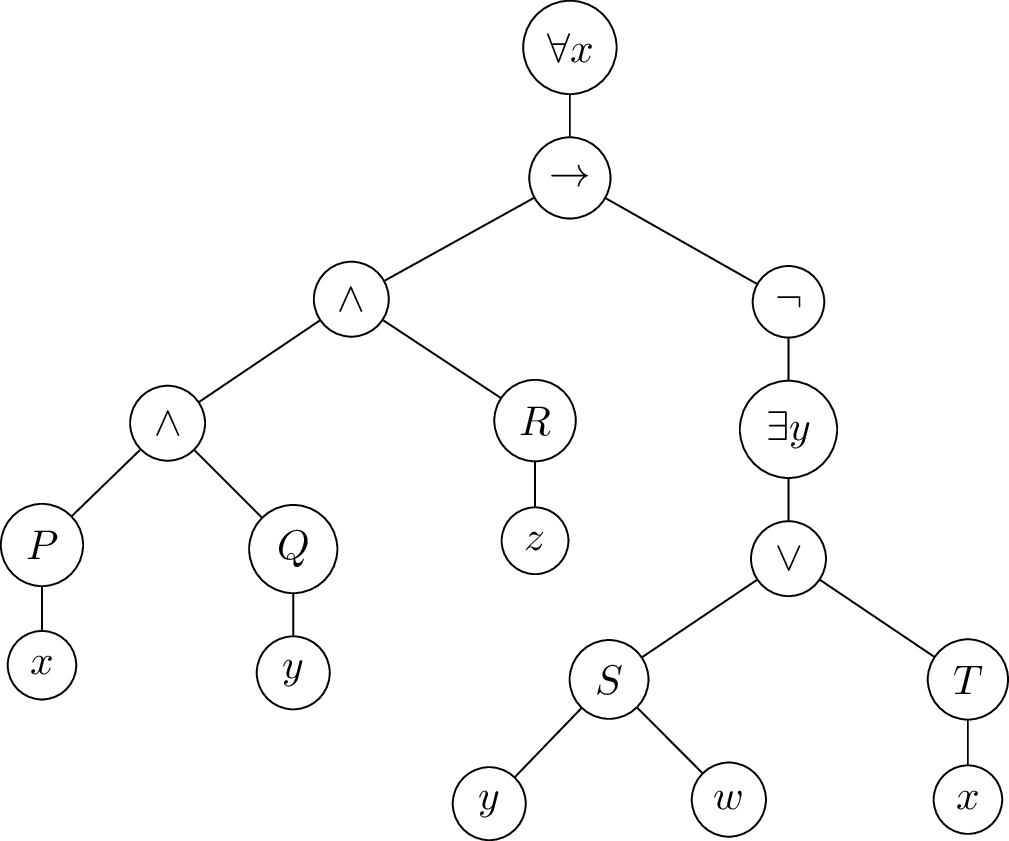

$$(\forall x(((P(x) \wedge Q(y)) \wedge R(z)) \rightarrow (\neg (\exists y(S(y,w) \vee T(x)))))).$$

In this formula, all occurrences of $x$ are bound, while all occurrences of $w$ and $z$ are free. However, the first occurrence of $y$ is free, while the second occurrence of $y$ is bound. In other words, a variable that isn't bound is free. We can see this more clearly if we look at its parse tree.

We can define the notion of a free variable more formally, recurisvely on the definition of a formula.

Definition 12.5. We describe the set of free variables $FV(\varphi)$ in a formula $\varphi$:

A formula with no free variables is called a closed formula or a sentence.

The notion of free and bound variables causes a bit of trouble if we're not careful in working with them. For instance, if we have a formula that looks like $$(\exists x(Q(x,y) \wedge ((\forall xP(x)) \vee (\exists x R(x,y))))).$$ In the above formula, each occurrence of the variable $x$ is bound to a different quantifier. This is analogous to our understanding of how scope and binding works in programming. In fact, these notions that we see in programming are based on the formal logic ideas.

This becomes important once we want to think about how to interpret these formulas. For instance, a formula $$(\forall x (\exists y(P(x,y) \rightarrow Q(x,y))))$$ should be equivalent to $$(\forall u (\exists u(P(u,v) \rightarrow Q(u,v)))).$$ since, intuitively, simply giving the variables a different name shouldn't change the meaning of the formula. However, we have to be more careful when we're considering free variables. Since we don't know what they mean, we can't rename them as we wish. Consider the formula $(\forall x(P(x,y,z)))$. We could end up with formulas like $$(\forall x P(x,u,v)) \quad (\forall x P(x,w,w)) \quad (\forall x P(x,y,x)).$$

This leads us to the necessity of formally defining the notion of substitution.