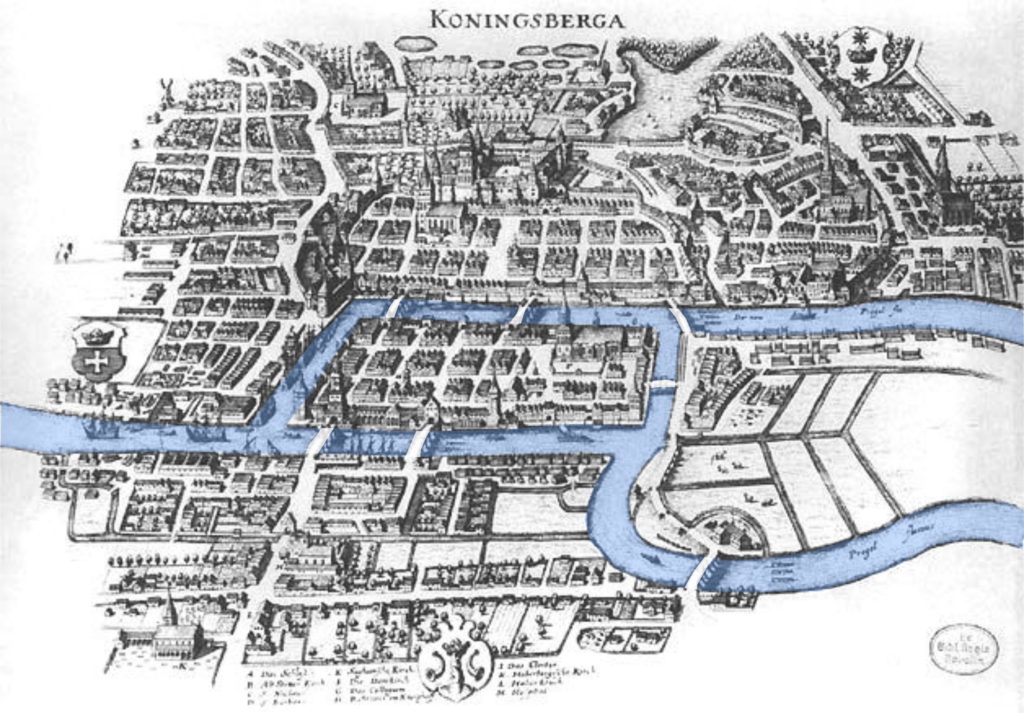

We can play around with the notions of paths and cycles a bit more. One of the first questions of this kind was asked by Euler concerning the bridges of Königsberg. The city was connected by a bunch of bridges over the rivers that passed through it. The question was: is there a way to walk through the city so that you only go across every bridge exactly once?

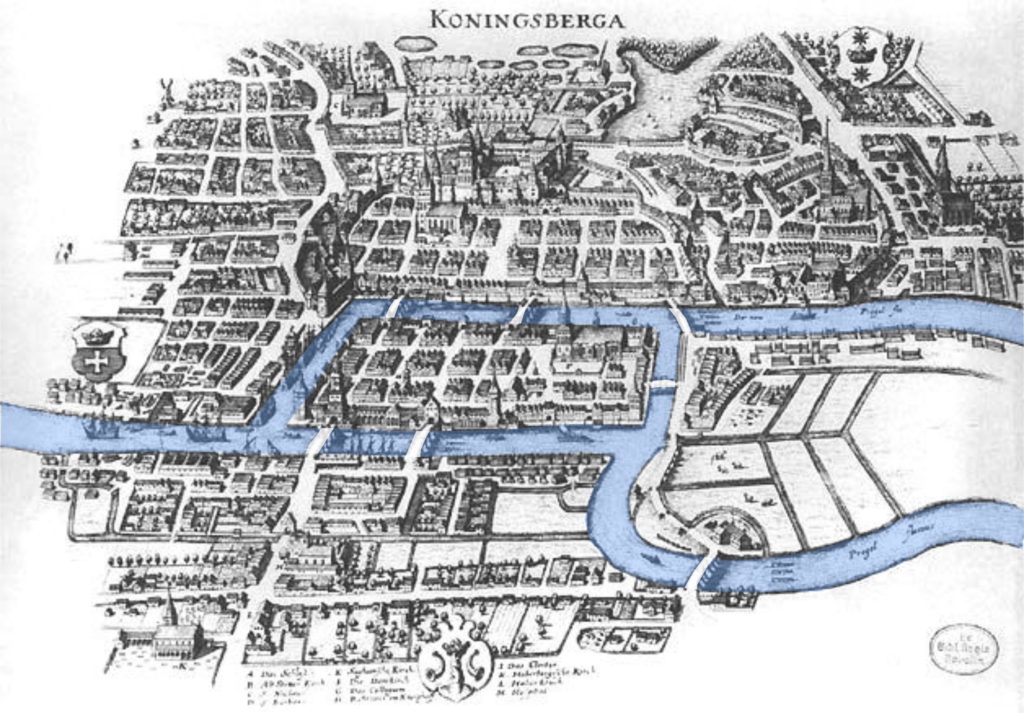

If we reframe this question, we can see that it's a graph question, where the bridges act as edges. Of course, this is a multigraph which is not what we're interested in, but we can ask the same question of the simple graph version of this graph.

Let $G$ be a graph. An Eulerian tour is a closed walk that contains every edge of $G$. An Eulerian path is a path that contains every edge of $G$.

The following, due to Euler, gives us necessary and sufficient conditions for an Eulerian tour to exist in our graph.

A graph $G = (V,E)$ contains an Eulerian tour if and only if every vertex has even degree.

First, suppose $G$ contains an Eulerian cycle. Consider a walk going through a vertex. The walk must traverse two edges incident to this vertex: one going in, and one going out. So for any vertex in the interior of our walk, we need to have traversed an even number of edges, and therefore the vertex will have even degree. This leaves the starting vertex. However, our walk has to end at the starting vertex, so that gives us our even degree.

Now, for the other direction, we assume that every vertex of $G$ has even degree and choose a walk of maximal length. This walk has to be a cycle. If not, the first and last vertices of the walk have an odd number of edges that were traversed, so there must be an unused edge incident that we can add to our walk. Suppose for contradiction that our walk doesn't contain every edge in $G$. Then we can choose a vertex where we have an unused edge incident to it. Now, we construct a new walk that doesn't contain any edges of our original walk and this must also be a cycle for the same reason as above. We can then join the two walks together to form a larger walk, which contradicts our assumption that our walk was maximal.

Euler's result holds for multigraphs, since the original Königsberg bridges problem was formulated for a multigraph.

However, 1736 was a long time ago and Königsberg has changed since. For one thing, it's now Kaliningrad, which is the part of the Russian Federation that's not connected to the rest of it. The bridges have changed too: there are only five bridges connecting the different parts of the city. Luckily for us, this is no longer a multigraph.

However, examining the graph, we see that the modern arrangement of the bridges of Kaliningrad still doesn't have the Euler condition, and so we can conclude that there still is no Eulerian tour through the city's bridges.

One question we can ask is how to find an Eulerian tour if we know our graph has one. The proof above actually gives us one! The algorithm is due to Carl Hierholzer in 1873. The algorithm on a graph $G = (V,E)$ is as follows:

There are two crucial properties that we rely on from Euler's proof above. The first is that we can't get stuck, since every vertex has even degree. The second is that we can make a larger tour by combining two cycles and we can do this until we exhaust every edge.

A quick analysis tells us that this algorithm takes about $O(|E|)$ time, or linear in the number of edges: we ever traverse any edge only once.

We can ask the same kind of questions but for vertices instead of edges. This question is due to William Rowan Hamilton. Hamilton was an Irish mathematician who invented the icosian game, which involves trying to find what we'll describe as a Hamiltonian cycle along the edges of a dodecahedron.

Let $G$ be a graph. A cycle is a Hamiltonian cycle if every vertex of $G$ is in the cycle. A path is a Hamiltonian path if every vertex of $G$ is in the path.

The usual binary encoding of a number interprets each digit as a power of 2. Suppose we're interested in counting something and so we would proceed by counting using three bits in the following way 000, 001, 010, 011, 100, 101, 110, 111. Generally, this is not a problem because we think of these abstractly. However, it's worth thinking about the physical implementation of such a system.

Here, the big question is when we go from something like 011 to 100: how do we guarantee that the system perfectly switches every bit at the same time? The answer is that we really can't. For physical systems, you can imagine that this is not feasible, but even for electronic systems, this is not always possible. This is also a big problem if the switching of bits is expensive, as in quantum systems.

To deal with this, we need an encoding of numbers based on the idea that only one bit is changed at a time. These are called Gray codes, after Frank Gray who defined them in the late 1940s.

Gray codes have a lot of interesting mathematical properties, but we'll focus on graph theory. We can ask the question of whether we can construct a Gray code for all $n$. It seems obvious that we can get one for $n = 2$ and with some work, we can get $n = 3$. Is there a better way to do this than just by trial and error?

Recall that $Q_n$ is the graph of $n$-bit strings which are joined by edges if they differ in exactly one position. This sounds suspiciously like a Gray code. Then our question becomes: is there a path through the $Q_n$ that hits every vertex exactly once? If there is, this gives us our Gray code.

For every integer $n \geq 2$, the $n$-cube $Q_n$ has a Hamiltonian cycle.

We will prove this by induction on $n$.

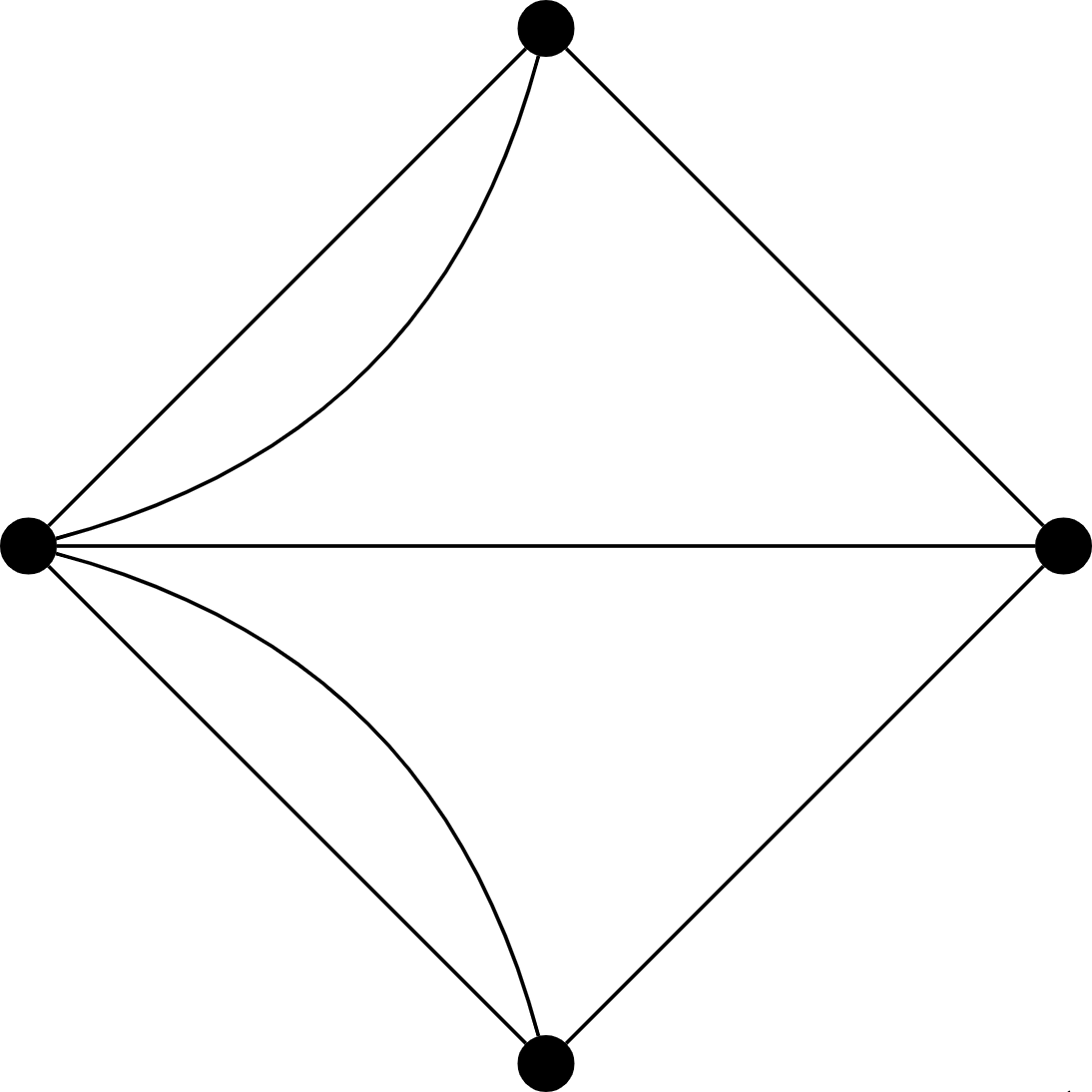

Base case. We have $n = 2$. Then our graph looks like this.

There is clearly a Hamiltonian cycle in this graph.

Inductive case. Let $k \geq 2$ and assume that $Q_n$ contains a Hamiltonian cycle. Now, consider the graph $Q_{n+1}$.

We partition our vertex set into two sets, with one set consisting of strings that begin with 0 and the other with strings that begin with 1. We have \begin{matrix} V_0 = \{0x \mid x \in \{0,1\}^k\}, & V_1 = \{1x \mid x \in \{0,1\}^k\}. \end{matrix} We observe that the subgraph induced by both of these sets is isomorphic to the $k$-cube, $Q_k$, since their vertex sets are really just binary strings of length $k$. Then by our inductive hypothesis, both of these subgraphs have a Hamiltonian cycle. Let $C = v_1 v_2 \cdots v_{2^k} v_1$ be a Hamiltonian cycle for $Q_k$. In other words, $v_1, \dots, v_{2^k}$ are strings of length $k$. Then we have the Hamiltonian cycles $C_0 = 0v_1, 0v_2, \dots, 0v_{2^k}, 0v_1$ in the subgraph induced by $V_0$ and $C_1 = 1v_1, 1v_2, \dots, 1v_{2^k}, 1v_1$ in the subgraph induced by $V_1$.

Then a Hamiltonian cycle for $Q_{k+1}$ is $$0v_1, 0v_2, \dots, 0v_{2^k}, 1v_{2^k}, 1v_{2^k-1}, \dots, 1v_2, 1v_1, 0v_1.$$ In other words, we remove the final edges $C_0$ and $C_1$ so that they are paths. Then we add the edge $(0v_{2^k}, 1v_{2^k})$ to the cycle. This edge exists, because these two strings differ only in the first position. We then add the edge $(1v_1, 0v_1)$ and again these two strings differ only in the first position. Then our cycle is traversed by following $C_0$, then entering $C_1$ and traversing $C_1$ in reverse.

This proof actually shows us a way to construct an $n$-bit Gray code. First, we take an $n-1$-bit Gray code. We append a 0 to the front of the $n-1$-bit Gray code and append a 1 to the front of the $n-1$-bit Gray code. Then we traverse the $n-1$-bit Gray code with the 0 at the beginning in its original order followed by the $n-1$-bit Gray code with the 1 at the beginning in reverse. It's because of this that the Gray code is also called a reflected code.

Just like with Eulerian cycles and paths, we can ask what conditions are necessary for a graph to contain a Hamiltonian path or cycle. Unlike Eulerian cycles and paths, we know of no necessary conditions for Hamiltonian cycles or paths, only sufficient ones. The following results are known, but we will not prove them.

Let $G = (V,E)$. If $\deg(v) \geq \frac{|V|}2$ for all $v \in V$, then there exists a Hamiltonian cycle.

Let $G = (V,E)$ with $|V| \geq 3$. If for all pairs of nonadjacent vertices $u,v \in V$, $\deg(u) + \deg(v) \geq |V|$, then $G$ has a Hamiltonian cycle.

Basically, these two results say that as long as you have enough edges, there will be a Hamiltonian path. But, as mentioned, if a graph does have a Hamiltonian cycle, it doesn't mean that it will satisfy the conditions in these theorems.

This gives us hints that the difficulty of finding Eulerian and Hamiltonian cycles/paths might also be different. And this is true: we already saw that there is a (very) efficient algorithm for finding Eulerian tours. However, there isn't any known efficient algorithm for Hamiltonian cycles/paths; if there were, we could solve the Traveling Salesman Problem efficiently too. This might be a bit surprising, but it serves as a preview of an important point of theoretical computer science: very similar-looking problems can be quite different in terms of their difficulty.

Here is a reformulation of Ore's theorem.

Let $G = (V,E)$ be a graph with $|V| \geq 3$ and let $u,v \in V$ be such that $\deg(u) + \deg(v) \geq n$. Then $G$ contains a Hamiltonian cycle if and only if $G \cup (u,v)$ contains a Hamiltonian cycle.

If $(u,v) \in E$, then we're done. So suppose $(u,v) \not \in E$. The if case is easy, so consider the only if direction. That is, suppose $G \cup (u,v)$ has a Hamiltonian cycle $C$. We want to find a Hamiltonian cycle in $G$. If $(u,v)$ isn't in $C$, then we're done, so suppose $(u,v)$ is in $C$. Let $u = v_1$ and $v = v_n$. This means that $C = u v_2 \cdots v_{n-1} v u$. Note that $u v_2 \cdots v_{n-1} v$ is a Hamiltonian path.

We claim that there exists a vertex $v_i$ in the path such that $(u,v_i) \in E$ and $(v_{i-1}, v) \in E$. Otherwise, $\deg(v) \lt n - \deg(u)$, which is a contradiction. Then we can construct the Hamiltonian cycle $u v_{i-1} v v_{n-1} \cdots v_{i+1} v_i u$.

Recall that showing a graph is 2-colourable amounted to dividing the vertices up into two sets (or a partition) so that all of the edges of the graph only travel between these two sets. Such a graph is a bipartite graph. Bipartite graphs come up naturally in a lot of applications because a bipartite graph models relationships between two groups of things: job applicants and potential employers, PhD students and PhD advisors, pigeons and pigeonholes, etc. In many of these applications, we would like to assign or match an object from the first set with an object from the second. This leads to the following notion.

A matching in a graph $G = (V,E)$ is a subset of edges $M \subseteq E$ such that no two edges in $E$ share the same incident vertex. A vertex that is incident to an edge in a matching $M$ is matched in $M$; otherwise, it is unmatched. A maximal matching is a matching that cannot be made larger; in other words, every edge in $E \setminus M$ is incident to a vertex matched in $M$. A maximum matching is a matching with the maximum number of edges.

The above definitions distinguish between maximal and maximum matchings. Just because you have a matching that can't be expanded doesn't mean that you necessarily have the largest possible matching!

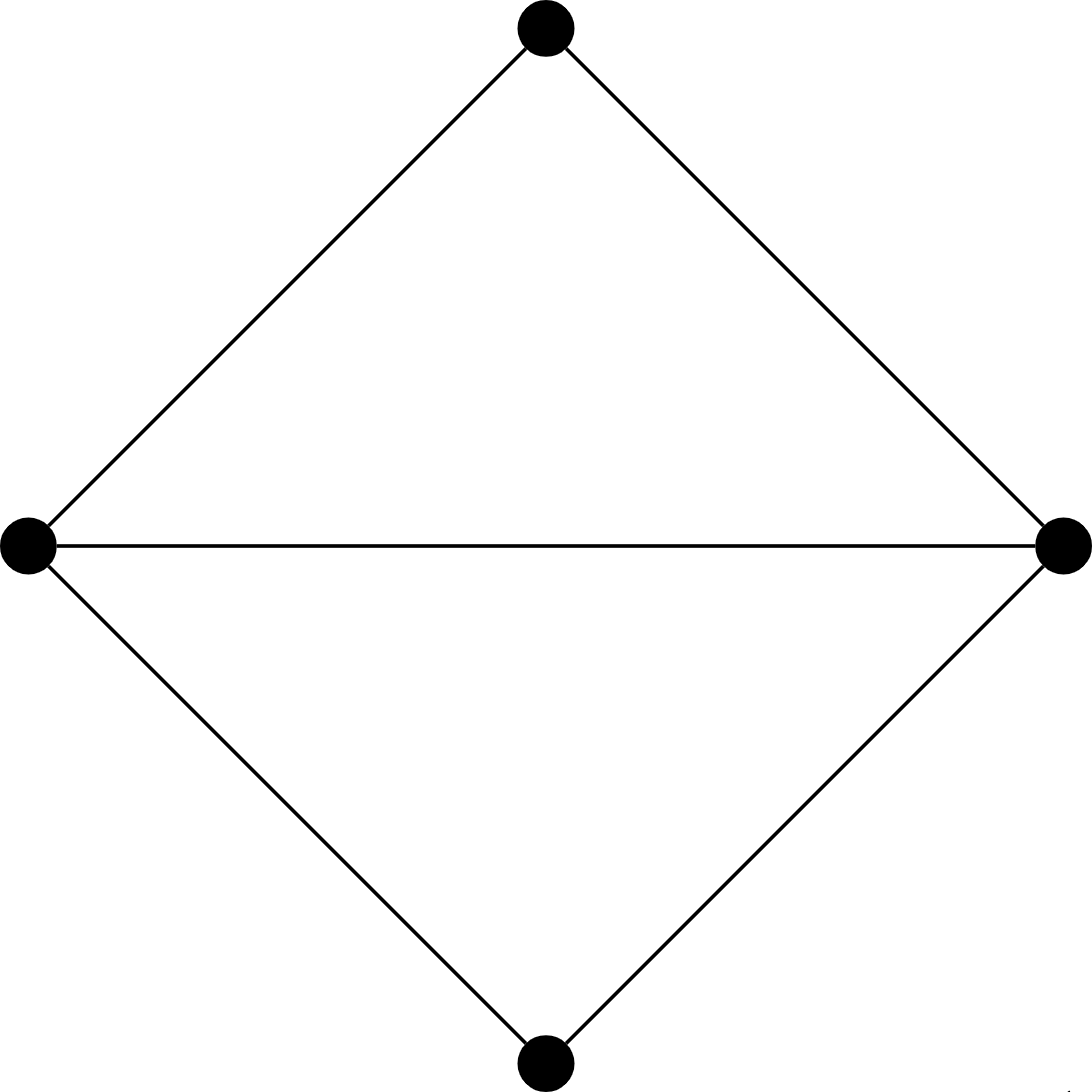

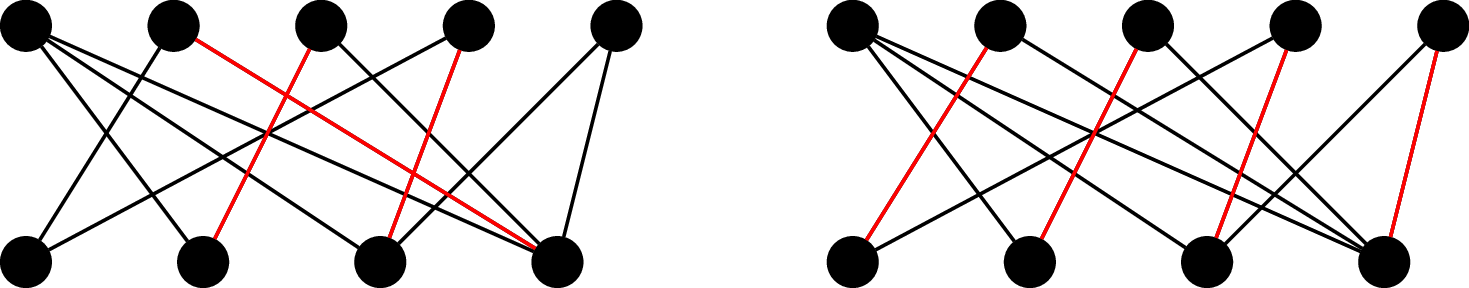

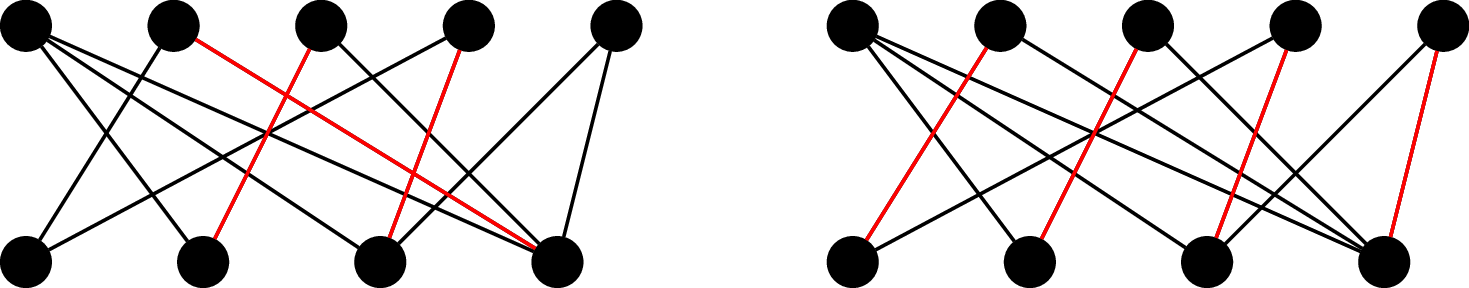

Consider the following graph and the highlighted matchings.

On the left, we have a maximal matching, since no edges can be added to the matching without violating the matching condition. However, this graph can exhibit a larger matching, which we have on the right. Since every vertex on the bottom is matched, this is a complete matching, and therefore, it is also a maximum matching. However, other maximum matchings exist for this graph.

We would like to try to find a maximum matching in our graph. But if we just go in and choose a matching without thinking, we may end up with a maximal matching. Can we be sure that we have a maximum matching and not just a maximal matching? We would like a way to figure out if, when we find ourselves with a maximal matching, whether there's a way to get a better matching or if such a matching exists. We will make use of the following idea.

Let $G$ be a graph with a matching $M$. An augmenting path for $M$ is a path $v_0 v_1 \cdots v_k$ such that $v_0$ and $v_k$ are unmatched in $M$ (i.e. $v_0, v_k$ do not belong to any matched edges) and the edges of the path alternate between edges in $M$ and edges not in $M$.

Let's have a look at what an augmenting path looks like.

Consider again our example:

We can see on the left, where we have a maximal but not maximum matching, there are many augmenting paths, while there are no such paths on the right. Now, observe that we can choose an augmenting path in the left-hand matching and if we flip all the edges in and out of the matching, we end up with the right-hand matching. This gives us a possible algorithm: start with an unmatched vertex and construct a matching iteratively by finding augmenting paths and flipping them.

Let $G = (V,E)$ be a graph with a matching $M$. If $G$ has an $M$-augmenting path, then $M$ is not a maximum matching.

First, we note that an $M$-augmenting path must be odd. Otherwise, the edges on the end can't both be outside of the matching. So suppose $G$ contains an $M$-augmenting path $e_1 e_2 \cdots e_{2k+1}$. By definition, $e_2, e_4, \dots, e_{2k} \in M$ and $e_1, e_3, \dots e_{2k+1} \not \in M$.

We define the matching $M' = (M \setminus \{e_{2i} \mid 1 \leq i \leq k\}) \cup \{e_{2i+1} \mid 0 \leq i \leq k\}$. Note that $M'$ is a matching of $G$ and was constructed by removing $k$ edges from $M$ and adding $k+1$ edges. Thus, $|M'| = |M| + 1 \gt |M|$. So $M$ was not a maximum matching.

In fact, this is one direction for Berge's lemma, due to Claude Berge in 1957, which says that this condition is necessary and sufficient.

Let $G = (V,E)$ be a graph with a matching $M$. Then $M$ is a maximum matching if and only if there is no $M$-augmenting path.

We get a clear algorithm from this result:

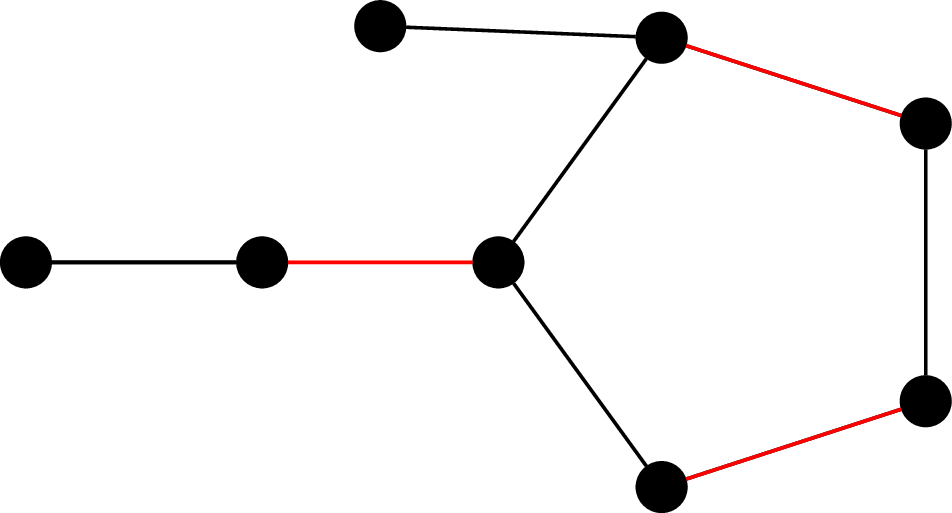

However, while this is true for any matching, simply applying this method as an algorithm runs into one possible complication. Consider the following graph and matching.

Observe that if we attempt to find our augmenting path by starting from the leftmost vertex, we could end up getting stuck, depending on which way we travel on the cycle.

Now, you might say, hold on, this graph is not bipartite! And that's true, this is not a bipartite graph. But, if we look carefully at the definition of matchings and the statement of Berge's lemma, we'll find that neither of these actually depends on the fact that $G$ is bipartite! Luckily, this issue with our algorithm is only a problem for graphs that are not bipartite.

So for bipartite graphs, the algorithm that we've described works perfectly fine. But what about for general graphs? It takes a bit more work, but the same idea works once you can figure out how to deal with odd cycles. This result is due to Jack Edmonds in 1965, who gave the first efficient algorithm for computing maximum matchings in general graphs, and which contributed to the idea of "efficient" algorithms as those with polynomial running times.