What is the mathematics of computer science and data analysis anyway? In short, what we really mean is linear algebra. What is it and why does it underpin the science of data analysis?

Broadly speaking, linear algebra is, simply put, the algebra of linear systems. Historically, linear algebra arose from an exercise you may be familiar with: solving systems of linear equations—these are equations where the variables have degree at most 1, so we don't deal with any squares or cubes or anything complicated like that. \begin{align*} 2x + 7y -5z &= 3 \\ -8x +10z &= -6 \\ - 7y -8z &= 4 \end{align*}

Much of the early theory arose in the 16th and 17th centuries, due to mathematicians like Gauss, Leibniz, Cauchy, and many others.

At some point, we realized that it makes sense to treat such a system as one "thing" and to study it like that. And so mathematicians ask the usual questions about "things": what do these things do, what do they look like, and so on. As with a lot of mathematics, this led to a great number of applications in engineering, physics, chemistry, and all across the sciences. But for a long time, this was limited as much by our imagination as it was by our capability to work with large enough objects.

This changed as soon as we had access to machines that could do some number crunching for us. One of the beautiful things about computer science is how it has revived and revitalized many areas of mathematics. This is not surprising for a machine that is essentially built out of math.

Let's think about what it is that we do when we want to solve a problem using computer science. After all, people get into computer science to "solve real problems" with computers or programming. But something has to happen in order for us to turn our "real problem" into something that is "solved with a computer".

The focus of this course is giving you the mathematical foundations and background to do the first few steps, before even thinking about programming. What does this look like?

Let's think about data and representation. Since you are familiar with Python, we can use this as a starting point. Though it is quite lax with them, Python has a notion of data types. Let's suppose that we want to do something very simple, like represent the size of something—maybe a list or a tree or some other bespoke data structure. What is a reasonable representation for this piece of information?

def __len__(self: Tree) -> ???:

The obvious choice one makes without interrogating deeply is to use an int. We assume that we want to count discrete objects, so it makes sense. Now we can ask what we can do with ints. We can run off a list of things we love to do with integers: add, multiply, subtract? Subtraction can pose a problem: does it make sense to subtract sizes of things? We may end up in a situation where we can end up with a size that is a negative number—does this make sense to allow? This suggests that a more appropriate data type might be something like a natural number and a programming language that is more strict with typing could allow use to properly set up and enforce such restrictions.

What about division? Again, we take for granted that we can divide numbers and nothing goes wrong, but is that really true? Here, Python is quite cavalier with this, in the same way that people tend to be. We go "of course you can divide two integers!", but, as you may have encountered, this can be a problem because what you get when you divide two integers is not an integer. Mathematically speaking, what you're guaranteed to get is a rational number. In Python, you are likely to get a float.

Before jumping into floating point numbers, let's think more on what it means to divide two integers. There is a way to divide using integers that is well-defined and produces integers—we just need to capture the right information. In grade school, we often first approach division not by using fractions or rational numbers, but by considering quotients and remainders. That is, for two integers $m$ and $n$, we can always find integers $q$ and $r$ such that $m = qn + r$, where $q$ is the quotient and $r$ is the remainder, with the restriction that $0 \leq r \lt n$. This function is sometimes called divmod, and in Python, divmod(m,n) produces the tuple (q,r).

>>> x = 13

>>> y = 5

>>> x/y

2.6

>>> type(x/y)

float

>>> divmod(x, y)

(2, 3)

>>> type(divmod(x, y))

tuple

>>> type(divmod(x,y)[0])

int

Okay, then what are floats? When first introduced to floats we tend to think of them as "decimal numbers". This is fine for a bit, but it hides the complexity of the data type. To think of this type more accurately, we have to ask: what do floats represent?

Floating point numbers are intended to represent real numbers—but what are real numbers? We tend to approach real numbers naively, without thinking too deeply about them. This is because thinking about real numbers too deeply can lead to all sorts of questions that kept the mathematicians of the early 20th century up at night.

The simplest way to think about real numbers is that they are any number that has an infinitely long repressentation. Perhaps the most famous of these is $\pi$. But really, any number that we think of as a "decimal" number is a real number. Even a number like $325.47$ has an infinite representation: imagine an unending sequence of 0's following the 7.

This has all sorts of mathematical consequences. For our purposes, it becomes clear what the problem with floating point numbers is. Floats are meant to be finite representations of infinite objects. We have unfortunately not worked out how to build computers that can represent real numbers with infinite precision, so we have to approximate. It is this approximation that creates pitfalls to the unassuming programmer (in addition to the natural complexity that real numbers bring).

All of this is just to say that types tell us more than just the representation of the data, but that they also determine what we can do with them. In some sense, the type of the data drives or prescribes or affords how we work with it.

So far, we've discussed numeric types in a lot of detail. This is because questions like representation are often brushed aside without interrogating very deeply, but these are basic questions that can drive our problem-solving approaches. So now we are ready to think about the next question: what if we want to represent something that has more than one piece of information associated with it?

By now, you've seen all sorts of complex, compound data types: lists, tuples, dictionaries, and even classes. But all of these types assume that we already know something about the data that we're working with. Instead, what we need is a type that captures a large amount of data that is related to each other in some way, but without necessarily knowing how.

The desired data type here is the vector. What is a vector and what can we do with this data type? That is the question that linear algebra is built around.

Mathematics, particularly pure mathematics, is concerned with understanding the world through structures. In some sense, all math is about the structure of some data type.

One of the reasons why data science works is the idea that data is not just statistical. Certainly, that's a big part of it, but the underlying mechanisms that make, say, machine learning work are about the hidden structures in the data. Such structures are algebraic in nature, and so we exploit linear algebra to uncover these structures.

In data science and machine learning, we treat data as a vector or matrix. One view of data science and machine learning then is that it's nothing but searching for patterns by exploiting the structure of the data using linear algebra. Everything sounds pretty easy when you think about it like this. Of course, one can say the same about quantum computing or anything that computers do: they just do a bunch of math.

Our purpose is to understand the how and why of all of this. Of course, with the proliferation of computing power and smart people writing programs, it's easy to wonder what the point in learning the mathematics is. So obviously, we're not learning this stuff so we can whip up our own linear algebra libraries, or even our own machine learning algorithms.

Rather, it's the recognition that when we solve a "real-world" problem using computers, somewhere underneath, after scratching enough layers off, what we're really dealing with is some form of math problem. And there's a bit of a paradox here—understanding how we get to that math problem and what we do with it is not just a mathematical problem.

Giving up control of this process amounts to letting the software engineers make the decisions. This may have been fine ten or fifteen years ago, but half the reason we're here is because we recognize that it isn't enough just to leave it in their hands.

We'll introduce some of the basic objects in linear algebra. The first is the vector.

A vector is an ordered collection of numbers, called scalars.

Because they are so important, we will distinguish vectors visually from other variables. In the notes (and in many texts), they will be set in boldface, so $\mathbf v$ is a vector, while $v$ is not. When writing by hand, we can't exactly write boldface, so we typically draw an arrow over the variable, like $\vec v$.

Unfortunately, this definition that we have is not really a formal or rigourous definition, for reasons we'll eventually see. But for most people, including us, this works well enough. For our purposes, we are dealing with finite-dimensional vectors over the real numbers $\mathbb R$—that is, our scalars are assumed to be real numbers.

One of the great things about math is how a lot of general structures can be set up and used in the same ways with weirder and more complex objects. For example, we can have vectors of complex numbers or vectors of polynomials. We don't need to think about any of this stuff, but it's interesting to think that the same framework has a logic that carries us beyond just plain old real numbers.

So you can think of vectors over real numbers in a few different ways. Traditionally, we can think of a vector as an arrow with one end starting at the origin, with a length and direction. In other words, a vector is an object that's situated in some geometric space. This interpretation is particularly popular in physics.

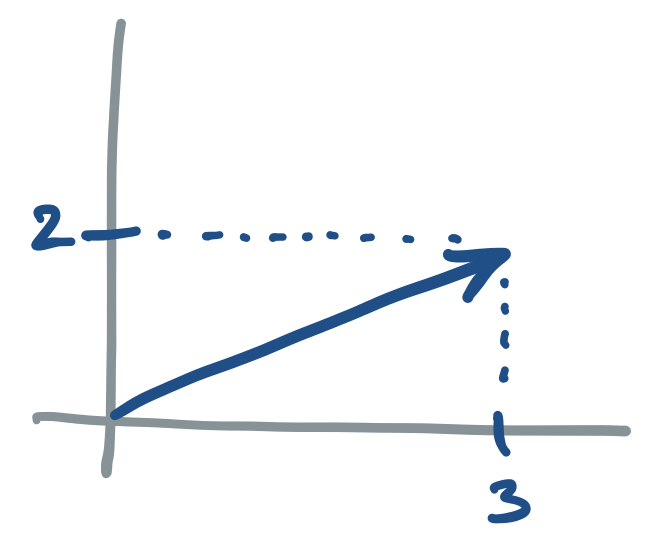

Consider the vector $\begin{bmatrix}3 \\ 2\end{bmatrix}$. We can represent this on a plane as:

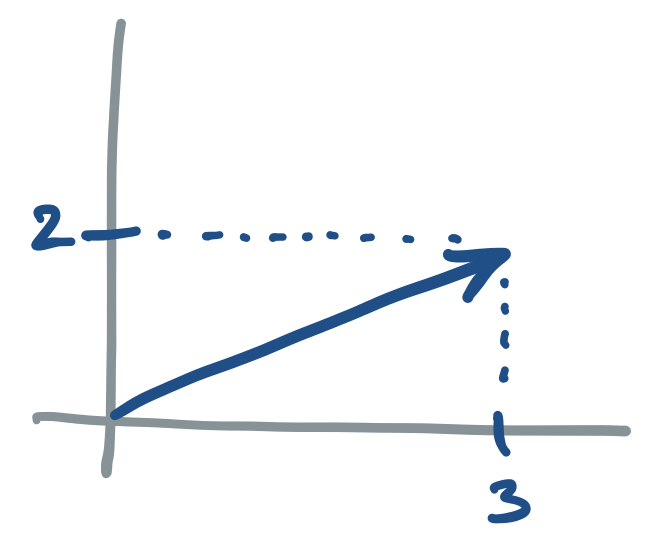

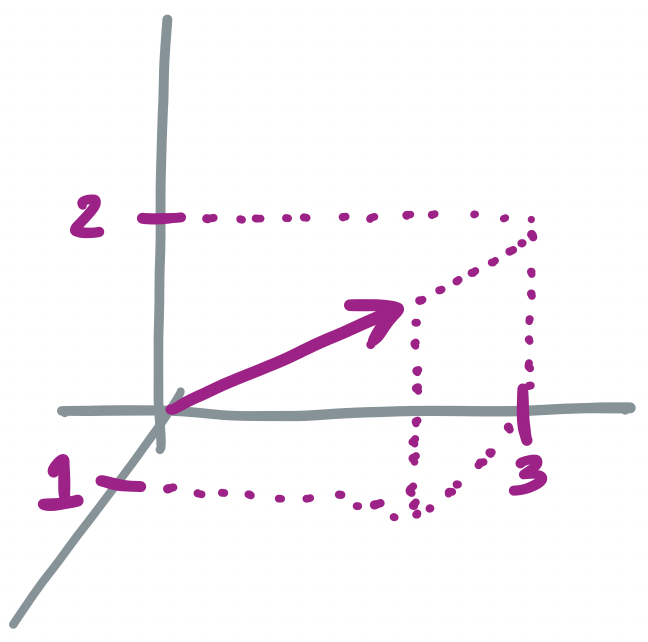

Now, if we have the vector $\begin{bmatrix}3 \\ 2 \\ 1\end{bmatrix}$, we have

We will not try to draw a vector with four components, but you get the idea.

A more abstract understanding of a vector is as an object with several components, kind of like a tuple. One can see how such an object can be used to represent a "real" object, with each component storing a particular piece of information about the object.

Consider the vector $\begin{bmatrix}3 \\ 2\end{bmatrix}$. Here are some possible interpretations of this vector:

But what separates vectors from plain old tuples? The difference is in what we can do with vectors. This distinction is common to both computer science and mathematics.

For example, in some programming languages, lists and strings are almost the same thing. But we'll find that there are operations that we can perform on strings that we can't do on lists, like sort them lexicographically. Simlarly, we discussed the difference between integers and floating point numbers earlier.

This may or may not be obvious, but in mathematics, just as in computer science, operations need to be defined before we can use them. For example, it's not obvious that addition should work on vectors. It certainly doesn't work the same way as with numbers, so we have to be a bit careful.

Let $\mathbf u = \begin{bmatrix} u_1 \\ \vdots \\ u_n\end{bmatrix}$ and $\mathbf v = \begin{bmatrix}v_1 \\ \vdots \\ v_n \end{bmatrix}$. Then we define $\mathbf u + \mathbf v$ by \[\mathbf u + \mathbf v = \begin{bmatrix} u_1 + v_1 \\ \vdots \\ u_n + v_n \end{bmatrix}.\]

In other words, the sum of two vectors $\mathbf u$ and $\mathbf v$ is the vector that has components that are just the sums of the components of $\mathbf u$ and $\mathbf v$.

Consider the vectors $\begin{bmatrix}3 \\ 5\end{bmatrix}$ and $\begin{bmatrix} 7 \\ 2 \end{bmatrix}$. Then $\begin{bmatrix}3 \\ 5 \end{bmatrix} + \begin{bmatrix}7 \\ 2\end{bmatrix} = \begin{bmatrix}10 \\ 7 \end{bmatrix}$.

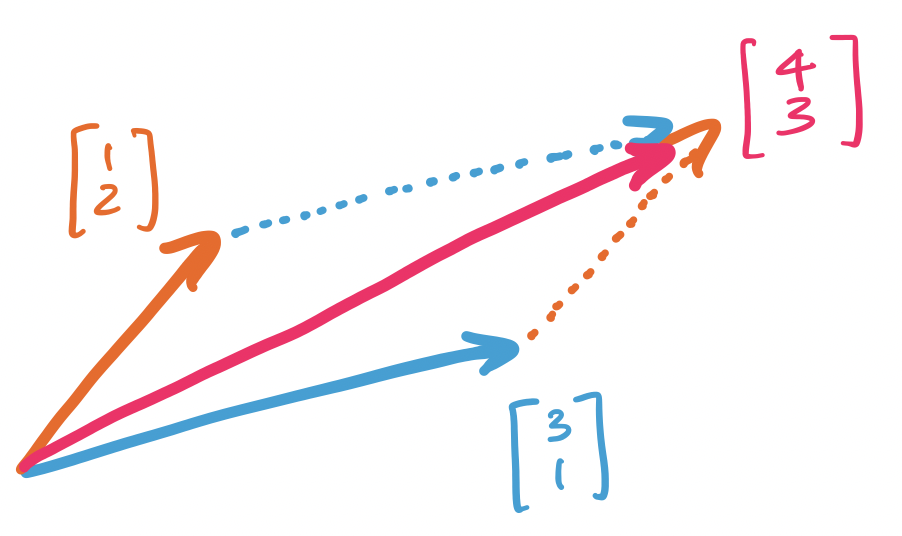

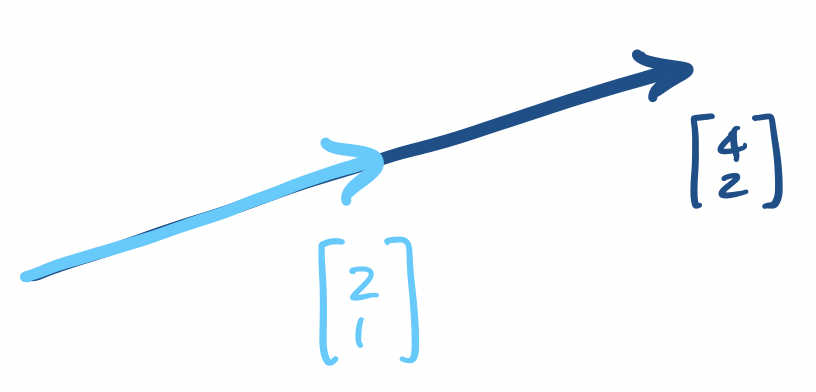

One helpful interpretation of vector addition is to view it geometrically. Suppose we have two vectors, $\begin{bmatrix} 1 \\ 2 \end{bmatrix}$ and $\begin{bmatrix} 3 \\ 1 \end{bmatrix}$. Adding them together gives us $\begin{bmatrix} 4 \\ 3\end{bmatrix}$. However, if we draw this out on the plane, we get the following:

Other than vectors, we have scalars.

A scalar is a real number.

Techncially, scalars are just the things that go in vectors but are not in a vector. Since we've already said we're happy to keep ourselves to real numbers, a scalar is just a real number. However, if we ever wanted to work with, say, complex numbers, then we can have complex scalars.

We typically reserve lowercase (Latin) letters for scalars, though lowercase Greek letters are also a popular choice.

Why are these called scalars? Well, we use scalars to define scalar multiplication.

Let $\mathbf v = \begin{bmatrix}v_1 \\ v_2 \\ \vdots \\ v_n\end{bmatrix}$ be a vector and let $c$ be a scalar. Then we define the scalar multplication of $\mathbf v$ by $c$ by \[c \mathbf v = \begin{bmatrix}cv_1 \\ cv_2 \\ \vdots \\ cv_n\end{bmatrix}.\]

Let $\mathbf u = \begin{bmatrix}4 \\ -2\end{bmatrix}$ and $\mathbf v = \begin{bmatrix} 3 \\ -1 \\ 5 \end{bmatrix}$. Then $3\mathbf u = \begin{bmatrix}12 \\ -6\end{bmatrix}$ and $\frac 1 4 \mathbf v = \begin{bmatrix}\frac 3 4 \\ - \frac 1 4 \\ \frac 5 4 \end{bmatrix}$.

Observe that the effect of scalar multiplication is scaling: the resulting vector has components that are factors of $c$ of the original (i.e. it is scaled by $c$).

Linear algebra is built around these two operations. It's all you need! This tells us something very neat: that the only thing we need to know how to do is add two things together and scale them. Just from these two operations, we get an enormous amount of utility.

Again, we note that the ability to add and scale vectors is what separates them from tuples. Indeed, a formal definition of a vector is an object that allows us to apply these two operations. This means that it's not the case that vectors are just arbitrary ordered collections of items—these items need to be able to be added and scaled.

How do we begin using these? Well, these two operations already give us something very neat: we can create all kinds of vectors by using other vectors and applying these operations to them.

Let $\mathbf u = \begin{bmatrix}1 \\ 3 \end{bmatrix}$ and $\mathbf v = \begin{bmatrix} -5 \\ 2 \end{bmatrix}$. Then $2\mathbf u - 3 \mathbf v = \begin{bmatrix} 17 \\ -3 \end{bmatrix}$.

This is an important idea, so we should call it something.

A vector $\mathbf v$ is a linear combination of vectors $\mathbf u_1, \dots, \mathbf u_n$ if there exist scalars $a_1, \dots, a_n$ such that \[\mathbf v = a_1 \mathbf u_1 + \cdots + a_n \mathbf u_n.\]

In other words, $\mathbf v$ can be expressed as the sum of some vectors after some scaling.

Here, we have an interesting question that crops up. Let's say that we have a bunch of vectors. What other vectors can we make with these? More formally, what is the set of vectors that are linear combinations of our original set of vectors?

These seem like arbitrary questions that are only interesting to mathematicians, and for a long time, you would've been right. However, computer science lets us find clever ways to make use of these questions.

For example, suppose we have a collection of objects. These objects have properties that can be expressed numerically. We can treat such an object as a vector of these properties. And if we have a bunch of these objects, we have a bunch of vectors. These objects have some similarities with each other.