Definition 14.3. Let $G = (V,\Sigma,P,S)$ be a context-free grammar and let $\beta, \gamma \in (V \cup \Sigma)^*$. We say that $\beta$ yields $\gamma$, denoted $\beta \Rightarrow_G \gamma$, if $\beta = x A y$ and $\gamma = x \alpha y$ for any $x,y \in (V \cup \Sigma)^*$ and there is a production $A \to \alpha \in P$.

We can define the $k$-step version of this relation, $\beta \Rightarrow_G^k \gamma$, if there is a sequence of $k$ productions in $P$ that can be applied from $\beta$ to yield $\gamma$. We define this recursively by

Then we define $\beta \Rightarrow_G^* \gamma$ if $\beta \Rightarrow_G^k \gamma$ for some finite $k$. Formally, for $\beta, \gamma \in (V \cup \Sigma)^*$, $\beta \Rightarrow_G^* \gamma$ if there exists a sequence of strings $\alpha_1, \alpha_2, \dots, \alpha_k \in (V \cup \Sigma)^*$ for some $k \geq 1$ such that $\beta = \alpha_1$, $\gamma = \alpha_k$, and $\alpha_{i-1} \Rightarrow_G \alpha_i$ for $2 \leq i \leq k$. Usually, we don't write $G$ if it is clear from context which grammar we're talking about.

In other words, we have $\beta \Rightarrow^* \gamma$ if we can transform $\beta$ into $\gamma$ by applying a finite number of production rules of $G$.

Definition 14.4. Let $G = (V,\Sigma,P,S)$ be a context-free grammar. The language generated by $G$ is $$L(G) = \{w \in \Sigma^* \mid S \Rightarrow_G^* w\}.$$

Definition 14.5. For a context-free grammar $G = (V,\Sigma,P,S)$, if we have $w \in L(G)$ and a sequence of strings $\alpha_1, \dots, \alpha_k \in (V \cup \Sigma)^*$ such that $\alpha_1 = S$, $\alpha_k = w$, and $\alpha_i \Rightarrow_G \alpha_{i+1}$ for $1 \leq i \leq k-1$, then we say that the sequence $\alpha_1, \dots, \alpha_k$ is a derivation of $w$. This definition implies that there is at least one derivation for every word $w \in L(G)$.

Definition 14.6. A language $L$ is a context-free language if there exists a context-free grammar $G$ such that $L(G) = L$.

Unlike for regular languages and finite automata, this is the definition and characterization for context-free languages. One might ask why these are called context-free languages and grammars. The name has to do with how the grammar works: for any production $A \to \alpha$, and any string $\beta A \gamma$, we can always apply the production $A \to \alpha$. The context of $A$, whatever is around $A$, has no bearing on what rule gets applied, only that the variable is present. We'll return to our example from above, the grammar $G$, defined as follows, $$S \to aSb \mid \varepsilon.$$ We claimed that this grammar generates the language $L = \{a^k b^k \mid k \geq 0\}$. So now, we'll prove it.

Proposition 14.7. $L(G) = L$.

Proof. First, suppose that $L \subseteq L(G)$ and consider a word $x \in L$. We will show that $x$ can be generated by $G$ by induction on $|x|$.

We begin with the base case, $|x| = 0$, which means that $x = \varepsilon$. However, $S \to \varepsilon$ is a production in $G$, so $S \Rightarrow x$ and $x \in L(G)$.

Now for our inductive step, we consider $|x| \geq 1$ and thus $x \neq \varepsilon$. For our induction hypothesis, we suppose that for any $w \in L$ with $|w| < |x|$, we have $w \in L(G)$. That is, $S \Rightarrow^* w$.

Since $x \in L$ and $|x| > 0$, we have $x = a^n b^n$ for some $n \geq 1$. Then this means that $w = a^{n-1} b^{n-1} \in L$. Since $|w| < |x|$, we have $w \in L(G)$ by our induction hypothesis and thus there is a derivation $S \Rightarrow^* w$. Then we can use this to derive $x$ by $$S \Rightarrow aSb \Rightarrow^* awb = x.$$ Thus, we have $x \in L(G)$ and $L \subseteq L(G)$ as desired.

Now, we'll show $L(G) \subseteq L$. Let $x \in L(G)$. We will show $x \in L$ by $k$, the number of steps in the derivation of $x$.

For our base case, we have $k = 1$, which means $x = \varepsilon$. Since $\varepsilon \in L$, the base case holds.

Now we consider $k \geq 2$ and assume that every word $w \in L(G)$ with fewer than $k$ steps in its derivation is in $L$. Since $k \geq 2$, the first step in the derivation of $x$ must be $S \to aSb$. Let $w \in L(G)$ be a word that can be derived in fewer than $k$ steps and let $x = awb$. We then have $w \in L$ by our induction hypothesis and thus $w = a^n b^n$ for some $n \geq 0$. Then $x = awb = a(a^n b^n)b = a^{n+1} b^{n+1} \in L$ and $L(G) \subseteq L$ as desired.

Thus, $L = L(G)$. $$\tag*{$\Box$}$$

Here's another example of how to formally prove that a language is generated by a grammar. Let $\Sigma = \{0,1\}$. We say that a word $w \in \Sigma^*$ is balanced if $|w|_0 = |w|_1$. Let $L = \{w \in \Sigma^* \mid |w|_0 = |w|_1 \}$ be the language of binary balanced words. We claim that the grammar $G$, defined by $$S \to 0S1S \mid 1S0S \mid \varepsilon$$ generates $L$.

Proposition 14.8. $L(G) = L$.

Proof. First, we will show that $L(G) \in L$ by induction on the number of steps $k$ in a derivation of a word $w \in L(G)$.

For our base case, we have $k = 1$ and we have $w = \varepsilon \in L$.

Now consider $k \geq 2$ and assume that every word $w' \in L(G)$ with fewer than $k$ steps in its derivation is in $L$. Since $k \geq 2$, we will consider the first step in the derivation of $w$ to be $S \to 0S1S$. Let $u,v \in L(G)$ be words that can be derived in fewer than $k$ steps and let $w = 0u1v$. Then we have $|w|_0 = |u|_0 + |v|_0 + 1$ and $|w|_1 = |u|_1 + |v|_1 + 1$. But $u,v \in L$ by our induction hypothesis, so we have $|u|_0 = |u|_1$ and $|v|_0 = |v|_1$, giving us $|w|_0 = |w|_1$. A similar argument can be made when taking $S \to 1S0S$ as the first step of the derivation. Therefore, we have $w \in L$ and $L(G) \subseteq L$ as desired.

Next, we will show that $L \subseteq L(G)$. Let $w \in L$. We will show this by induction on $n = |w|$.

For the base case, we have $n = 0$ and $w = \varepsilon \in L(G)$ by $S \Rightarrow \varepsilon$.

Now let $n \geq 1$ and assume that for all strings $w' \in L$ with $|w| < n$, we have $w' \in L(G)$. Let $w = a_1 \cdots a_n$, where $a_i \in \Sigma$ for $1 \leq i \leq n$. Since $w \in L$, we have $$|a_1 \cdots a_n|_0 = |a_1 \cdots a_n|_1.$$

Let $m$ be the minimum value such that $$|a_1 \cdots a_m|_0 = |a_1 \cdots a_m|_1.$$

In other words, $a_1 \cdots a_m$ is the shortest prefix of $w$ that is still balanced. We will show that $a_1 \neq a_m$. Assume to the contrary that $a_1 = a_m$. We define $$d_k = |a_1 \cdots a_k|_1 - |a_1 \cdots a_k|_0$$ for all $1 \leq k \leq m$. From above, we must have $d_m = 0$. Then, we have \begin{align*} |a_1 \cdots a_m|_1 &= |a_1 \cdots a_{m-1}|_1 + |a_m|_1 = |a_1 \cdots a_{m-1}|_1 + |a_1|_1 \\ |a_1 \cdots a_m|_0 &= |a_1 \cdots a_{m-1}|_0 + |a_m|_0 = |a_1 \cdots a_{m-1}|_0 + |a_1|_0 \end{align*} and by subtracting these two equations, we have $d_m = d_{m-1} + d_1$. Observe that $d_m = 0$ and $d_1 \neq 0$, and therefore we have $d_{m-1} \neq 0$ and that $d_{m-1}$ and $d_1$ must have opposite sign. But note also that consecutive values of $d_k$ differ by only 1. Since $d_1$ and $d_{m-1}$ have opposite sign, this must mean there exists $k$ with $2 \leq k \leq m-2$ such that $d_k = 0$, contradicting our assumed minimality of $m$. Thus, $a_1 \neq a_m$.

This will allow us to construct our derivation. Since $$|a_1 \cdots a_m|_0 = |a_1 \cdots a_m|_1$$ and $a_1 \neq a_m$, we have $u = a_2 \cdots a_{m-1}$ and $v = a_{m+1} \cdots a_n$ such that $|u|_0 = |u|_1$ and $|v|_0 = |v|_1$. Since $|u|,|v| < n$, we have $u,v \in L(G)$ and therefore $S \Rightarrow^* u$ and $S \Rightarrow^* v$. Then we have the following possibile derivations for $w$, \begin{align*} S &\Rightarrow 0S1S \Rightarrow^* 0u1v = w, \\ S &\Rightarrow 1S0S \Rightarrow^* 1u0v = w. \\ \end{align*} Thus, $w \in L(G)$ and $L \subseteq L(G)$ as required. $$\tag*{$\Box$}$$

Example 14.9. Consider the grammar $G$ defined by $$S \rightarrow (S) \mid SS \mid \varepsilon.$$ Here, our terminal symbols are $\{(,)\}$. The language that $G$ generates is the language of balanced parentheses. A detailed proof of this is given in Kozen, Chapter 20. This language goes one step further than the language of balanced binary words from the previous example, because here, the order of the symbols is important, rather than just the quantity.

This family of languages is also called the Dyck language, for Walther von Dyck, known for his contributions to combinatorial group theory. Dyck languages happen to have some interesting combinatorial and algebraic properties. In formal language theory, the Dyck languages give an important characterization for the context-free languages.

Theorem (Chomsky-Schützenberger). Every context-free language is a homomorphic image of the intersection of a Dyck language and a regular language.

The theorem is due to Chomsky and Schützenberger in 1963. A proof of the Chomsky-Schützenberger theorem can be found in Kozen, Chapter G.

Here, a Dyck language refers to a language of $n$ types of balanced parentheses. One can also interpret this as a language in which the alphabet symbols are matched or paired.

A homomorphism (also called morphism) is a function $h:\Sigma \to \Gamma$ such that $h(uv) = h(u)h(v)$. The basic idea behind morphisms is that they map words in $\Sigma^*$ to words in $\Gamma^*$, where $\Sigma$ and $\Gamma$ are alphabets, while preserving the structure of the word.

The Chomsky-Schützenberger theorem tells us that every context-free language can be described by a regular language, a Dyck language, and a morphism. Since regular languages are closed under homomorphisms (you can try proving this), what this tells us is that what really separates regular languages from context-free languages is the ability to express "nestedness", in the sense of brackets or trees.

Marcel-Paul Schützenberger was a French mathematician who was one of Chomsky's early collaborators and together they proved many important results about context-free languages. Besides his work with Chomsky, Schützenberger produced many influential results in formal languages and combinatorics on words.

Example 14.10. An easy example of a context-free language is the language of palindromes. A less obvious example of a context-free language is the language of non-palindromes. Consider the following grammar for the language of non-palindromes over the alphabet $\{a,b\}$. \begin{align*} S &\rightarrow aSa \mid bSb \mid aAb \mid bAa \\ A &\rightarrow aAa \mid aAb \mid bAa \mid bBb \mid a \mid b \mid \varepsilon \end{align*}

Here, the grammar generates a prefix and suffix simultaneously, "growing" the word from the middle. This guarantees a symmetry, so we can generate the $i$th and $i$-th-last symbol of the word at the same time. This is useful for the language of palindromes, but here, the productions $S \rightarrow aAb \mid bAa$ enforce the condition that for at least one $i$, the $i$th and $i$th-last symbols are not the same, which breaks the palindromic property. Since the only way to finish the word is by expanding the variable $A$, this grammar requires that there is at least one such asymmetry.

Note that while this example gives us one instance of a context-free language whose complement is also context-free, the context-free languages are not closed under complement.

As we've seen from our definition of context-free grammars, we never really need to specify a particular order for how we might apply production rules or the order in which we should replace variables. However, it's a good idea to have some sort of systematic approach to how we apply productions and the kinds of derivations that result from such an approach.

Definition 14.11. We say that a leftmost derivation is a derivation in which the leftmost variable is expanded at each step. Here's an example of a leftmost derivation of a string generated by the grammar for binary balanced words: $$S \Rightarrow 0S1S \Rightarrow 01S0S1S \Rightarrow 010S1S \Rightarrow 0101S \Rightarrow 0101.$$

We can define the rightmost derivation similarly. Since, as mentioned above, it doesn't matter which order variables are acted upon by production rules, we can conclude that every word generated by a CFG has a leftmost derivation. As it turns out, leftmost derivations have an interesting correspondence with parse trees. Parse trees are a way for us to visualize how a word is generated from a grammar.

Definition 14.12. Given a grammar $G$, a tree is a parse tree for $G$ if it satisfies the following conditions:

Any subtree of a parse tree is also a parse tree, except for a leaf.

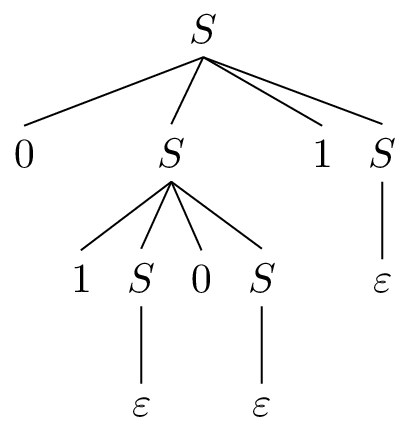

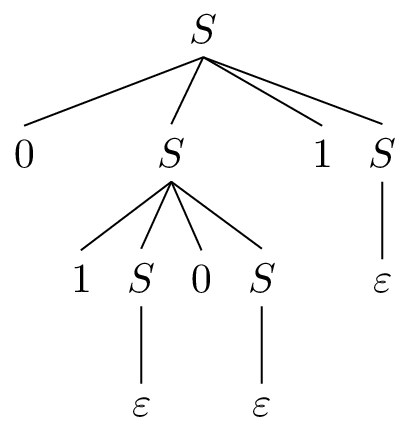

The yield of a parse tree is the concatenation of the symbols at the leaves of the tree from left to right. A full parse tree is one whose root is the start variable and the leaves are all labeled with terminals. Such a tree yields a word generated by the grammar. The following is the parse tree for the derivation we saw earlier.

As alluded to before, there is a bijection between the set of parse trees of a word and the set of its leftmost derivations. It isn't difficult to see that performing a depth-first traversal of a parse tree allows us to recover a leftmost derivation. There are a whole bunch of results that we could prove about this, but we're only interested in parse trees in how they can be used for other purposes.

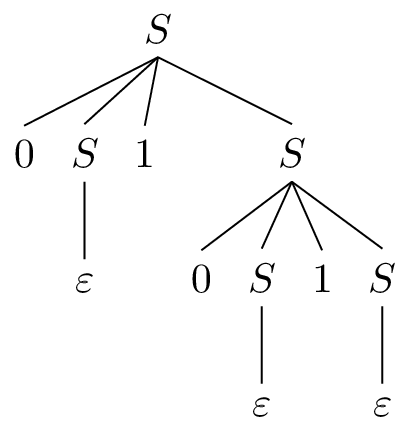

From what we just discussed, you might have noticed that we talked about sets of leftmost derivations of a single word. While it's not a surprise that there may be multiple ways to arrive at an arbitrary derivation of a word, it is a bit more interesting that even in restricting ourselves to leftmost derivations, we can come up with multiple derivations. For instance, here is a different derivation generating the same word from earlier: $$S \Rightarrow 0S1S \Rightarrow 01S \Rightarrow 010S1S \Rightarrow 0101S \Rightarrow 0101.$$

And here is its associated parse tree.

Definition 14.13. A grammar $G$ is ambiguous if there is a word $w \in L(G)$ with more than one leftmost derivation (or, equivalently, parse tree).

Obviously, we would like for our grammars to be unambiguous as much as is possible. For instance, we can do a bit of work and come up with an unambiguous grammar for balanced words as follows: \begin{align*} S &\to 0X1S \mid 1Y0S \mid \varepsilon \\ X &\to 0X1X \mid \varepsilon \\ Y &\to 1Y0Y \mid \varepsilon \end{align*}

Here, we've added variables to sort of corral the derivation into obeying some property. Here, we define new variables which obey the property that words they generate are totally balanced. A totally balanced word $w$ has the property that, in addition to satisfying $|w|_0 = |w|_1$, every prefix $w'$ of $w$ satisfies $|w'|_0 \geq |w'|_1$. This is the case for words generated by $X$, while the role of 0 and 1 are reversed for those words generated by $Y$. In this way, there is only one option for a particular string to be generated.

But what we would really like is to be able to take a grammar $G$ that is ambiguous and compute an equivalent grammar that is unambiguous. Unfortunately, we run into our first allusion to the idea of uncomputability here: there does not exist any algorithm which allows us to determine whether or not a grammar is ambiguous.

Furthermore, so far as we've discussed it, ambiguity is a property of a grammar, not a language. This raises the natural question of whether every context-free language has an unambiguous grammar. Unfortunately, the answer is no.

Definition 14.14 We say that a language is inherently ambiguous if there is no unambiguous grammar that generates it.

An example of an inherently ambiguous language is $$\{a^k b^k c^m \mid k,m \geq 0\} \cup \{a^k b^m c^m \mid k,m \geq 0 \}.$$ We won't be proving this, but to see how this might be the case, one can consider parse trees for the string $a^n b^n c^n$.