So far our discussion of the Myhill–Nerode theorem has focused on finite automata. However, our original motivation for Myhill–Nerode was to be able to say something about regular languages apart from finite automata. A natural follow-up question to that is what Myhill–Nerode says about regular expressions, if anything.

So let's take another look at regular expressions. While we have a pretty good understanding of how a DFA processes a word, we've been comparatively vague about how to think about the same process when matching a word against a regular expression. For example, how would we talk about a word that has partially matched a regular expression, in the same way that we could think about a DFA that's halfway through reading a word?

Suppose we have a regular expression $\mathtt{b(a+b)^*b}$ and I have the first letter of a word, $b$. I want to know what the possible matches are after I have read/matched a $b$. For this expression, it is easy to tell: it is anything that ends with a $b$. But we can also use our regular expression to describe this set: it is the original expression without the first factor, $\mathtt{(a+b)^*b}$.

This idea of a regular expression describing the set of strings after a partial match is called the derivatives of the regular expression. We can think of derivatives in the sense that we'll be considering a regular expression after it has partially matched a word, or capturing how the expression changes as it matches a word.

Since this is a set of strings, it's a language. What does this language look like?

The quotient of a language $L$ by a word $w$ is the language $$w^{-1} L = \{x \in \Sigma^* \mid wx \in L\}.$$ Much like how ideas like concatenation, powers, and factors (subwords) invoke analogies to multiplication, the use of the term quotient in this case can be thought of in the same way. The quotient $w^{-1} L$ is what's left when we "factor out" $w$ from the front of the word.

Note that we can take quotients on any language, not just those that are regular. However, a key observation that Janusz Brzozowski made in 1964 was that one can compute a quotient for a regular language via its regular expression. This is the genesis of the derivative.

First, we define the notion of the constant term of a regular expression.

The constant term of a regular expression $\mathbf r$, denoted $\mathsf c(\mathbf r)$, is defined by

The consequence of this definition can be summed up as follows.

For a regular expression $\mathbf r$, $$\mathsf c(\mathbf r) = \begin{cases} \varepsilon & \text{if $\varepsilon \in L(\mathbf r)$,} \\ \emptyset & \text{if $\varepsilon \not \in L(\mathbf r)$,} \end{cases}$$ and therefore, $L(\mathsf c(\mathbf r)) = L(\mathbf r) \cap \{\varepsilon\}$.

Observe that the result of $\mathsf c(\mathbf r)$ is a regular expression: it is either $\varepsilon$ or $\emptyset$, depending on whether or not $\varepsilon$ is matched by $\mathbf r$ (i.e. whether $\varepsilon$ is a member of the language of $\mathbf r$).

Let's consider the constant term of $\mathbf r = \mathtt{b(a+b)^*b}$. From the above proposition, this should be $\emptyset$, since $\varepsilon \not \in \mathbf r$. According to the definition, we have \begin{align*} \mathsf c(\mathtt{b(a+b)^*b}) &= \mathsf c(\mathtt b) \mathsf c(\mathtt{(a+b)^*b}) \\ &= \emptyset \cdot \mathsf c(\mathtt{(a+b)^*b}) \\ &= \emptyset \end{align*}

Why is this called the constant term? If we recall the regular expressions as an algebra, then we can think of $\emptyset$ as the "0" (additive identity) and $\varepsilon$ as the "1" (multiplicative identity) of the regular expressions. In this way, they are the constants.

Remember also that derivatives are regular expressions. The goal is to define a systematic way of computing the derivatives when given a regular expression. The following definition tells us how.

Let $\mathbf r$ be a regular expression. Then the derivative of $\mathbf r$ with respect to $a \in \Sigma$, denoted $\frac{d}{da}\left(\mathbf r\right)$, is defined recursively by

You might think this notation is kind of cute because it evokes the idea of derivatives from calculus. This notation is due to Jacques Sakarovitch, in Elements of Automata Theory, and I think it's nicer than the $D_a$ from the original Brzozowski paper.

The claim here is that if I have a regular expression $\mathbf r$, applying this definition for a symbol $a \in \Sigma$ will give me a regular expression $\frac{d}{da}\left(\mathbf r\right)$ that describes all words $x$ such that $ax$ was matched by $\mathbf r$. In other words, the derivative $\frac{d}{da}(\mathbf r)$ should describe the quotient $a^{-1}L(\mathbf r)$. This is a statement that requires proof.

Let $\mathbf r$ be a regular expression and let $a \in \Sigma$ be a symbol. Then, $$L\left(\frac{d}{da}\left(\mathbf r\right)\right) = a^{-1} L\left(\mathbf r\right) = \{x \in \Sigma^* \mid ax \in L\left(\mathbf r\right)\}.$$

We will prove this by induction on $\mathbf r$.

For the base cases, we have the following

Now, let $\mathbf s$ and $\mathbf t$ be regular expressions and assume that for all $a \in \Sigma$, $L\left(\frac{d}{da}\left(\mathbf s\right)\right) = a^{-1}L\left(\mathbf s\right)$ and $L\left(\frac{d}{da}\left(\mathbf t\right)\right) = a^{-1}L\left(\mathbf t\right)$.

First, we have $\mathbf r = \mathbf s + \mathbf t$: \begin{align*} L\left(\frac{d}{da}\left(\mathbf s + \mathbf t\right)\right) &= L\left(\frac{d}{da}\left(\mathbf s\right)+ \frac{d}{da}\left(\mathbf t\right)\right) \\ &= L\left(\frac{d}{da}\left(\mathbf s\right)\right) \cup L\left(\frac{d}{da}\left(\mathbf t\right)\right) \\ &= a^{-1} L\left(\mathbf s\right) \cup a^{-1} L\left(\mathbf t\right) \\ &= \{x \in \Sigma^* \mid ax \in L\left(\mathbf s\right)\} \cup \{x \in \Sigma^* \mid ax \in L\left(\mathbf t\right)\} \\ &= \{x \in \Sigma^* \mid ax \in L\left(\mathbf s\right) \cup L\left(\mathbf t\right)\} \\ &= \{x \in \Sigma^* \mid ax \in L\left(\mathbf s + \mathbf t\right)\} \\ &= a^{-1} L\left(\mathbf s + \mathbf t\right) \end{align*}

Next, we have $\mathbf r = \mathbf s \mathbf t$. We will define $L\left(\mathbf s\right)^\circ = L\left(\mathbf s\right) - \{\varepsilon\}$. Note that $L\left(\mathbf s\right) = L\left(\mathbf s\right)^\circ \cup L\left(\mathsf c\left(\mathbf s\right)\right)$. \begin{align*} a^{-1} L\left(\mathbf s \mathbf t\right) &= \{x \in \Sigma^* \mid ax \in L\left(\mathbf s\right)L\left(\mathbf t\right)\} \\ &= \{x \in \Sigma^* \mid ax \in \left(L\left(\mathbf s\right)^\circ \cup L\left(\mathsf c\left(\mathbf s\right)\right)\right) L\left(\mathbf t\right)\} \\ &= \{x \in \Sigma^* \mid ax \in \left(L\left(\mathbf s\right)^\circ L\left(\mathbf t\right) \cup L\left(\mathsf c\left(\mathbf s\right)\right) L\left(\mathbf t\right)\right)\} \\ &= \{x \in \Sigma^* \mid ax \in L\left(\mathbf s\right)^\circ L\left(\mathbf t\right)\} \cup \{x \in \Sigma^* \mid ax \in L\left(\mathsf c\left(\mathbf s\right)\right) L\left(\mathbf t\right)\} \\ &= \{uv \in \Sigma^* \mid au \in L\left(\mathbf s\right)^\circ, v \in L\left(\mathbf t\right)\} \cup a^{-1} L\left(\mathsf c\left(\mathbf s\right)\mathbf t\right) \\ &= \left(a^{-1} L\left(\mathbf s\right)^\circ\right) L\left(\mathbf t\right) \cup a^{-1} L\left(\mathsf c\left(\mathbf s\right)\mathbf t\right) \\ \end{align*} Here, we make the observation that $a^{-1} L\left(\mathbf s\right)^\circ = a^{-1} L\left(\mathbf s\right)$, since $a^{-1} \{\varepsilon\} = \emptyset$. Therefore, $\left(a^{-1} L\left(\mathbf s\right)^\circ\right) L\left(\mathbf t\right) = L\left(\frac{d}{da}\left(\mathbf s\right)\mathbf t\right)$. We also observe that $a^{-1} L\left(\mathsf c\left(\mathbf s\right) \mathbf t\right) = L\left(\mathsf c\left(\mathbf s\right) \frac{d}{da}\left(\mathbf t\right)\right)$, since $\mathsf c\left(\mathbf s\right)$ is either $\emptyset$ or $\varepsilon$. Putting this together, we continue, to get the following: \begin{align*} a^{-1} L\left(\mathbf s \mathbf t\right) &= \left(a^{-1} L\left(\mathbf s\right)^\circ\right) L\left(\mathbf t\right) \cup a^{-1} L\left(\mathsf c\left(\mathbf s\right)\mathbf t\right) \\ &= L\left(\frac{d}{da}\left(\mathbf s\right) \mathbf t\right) \cup L\left(\mathsf c\left(\mathbf s\right) \frac{d}{da}\left(\mathbf t\right)\right) \\ &= L\left(\frac{d}{da}\left(\mathbf s\right) \mathbf t + \mathsf c\left(s\right) \frac{d}{da}\left(\mathbf t\right)\right) \\ &= L\left(\frac{d}{da}\left(\mathbf s \mathbf t\right)\right) \end{align*}

Therefore, $L\left(\frac{d}{da}\left(\mathbf s \mathbf t\right)\right) = a^{-1}L\left(\mathbf s \mathbf t\right)$.

Finally, we have $\mathbf r = \mathbf s^*$. \begin{align*} L\left(\frac{d}{da}\left(\mathbf s^*\right)\right) &= L\left(\frac{d}{da}\left(\mathbf s\right)\mathbf s^*\right) \\ &= \left(a^{-1}L\left(\mathbf s\right)\right) L\left(\mathbf s^*\right) \\ &= \{xy \in \Sigma^* \mid ax \in L\left(\mathbf s\right), y \in L\left(\mathbf s^*\right)\} \\ &= \{w \in \Sigma^* \mid aw \in L\left(\mathbf s^*\right)\} \\ &= a^{-1} L\left(\mathbf s^*\right) \end{align*}

Let's compute the derivatives for the regular expression $\mathbf r = \mathtt{b(a+b)^*b}$. We saw above already that the derivative with respect to $b$ should be $\mathtt{(a+b)^*b}$, but let's verify that using our definition. We have \begin{align*} \frac{d}{db} \mathbf r &= \frac{d}{db} \mathtt{b(a+b)^*b} \\ &= \frac{d}{db}(\mathtt b) \cdot \mathtt{(a+b)^*b} + \mathsf c(\mathtt b) \cdot \frac{d}{db} \mathtt{(a+b)^*b} \\ &= \varepsilon \cdot \mathtt{(a+b)^*b} + \emptyset \cdot \frac{d}{db} \mathtt{(a+b)^*b} \\ &= \mathtt{(a+b)^*b} \end{align*}

Then for $a$, we have \begin{align*} \frac{d}{da} \mathbf r &= \frac{d}{da} \mathtt{b(a+b)^*b} \\ &= \frac{d}{da}(\mathtt b) \cdot \mathtt{(a+b)^*b} + \mathsf c(\mathtt b) \cdot \frac{d}{da} \mathtt{(a+b)^*b} \\ &= \emptyset \cdot \mathtt{(a+b)^*b} + \emptyset \cdot \frac{d}{da} \mathtt{(a+b)^*b} \\ &= \emptyset \end{align*}

Now, for now, let's keep going. We can keep on taking derivatives of derivaitves. Obviously, no matter what we do, we have $\frac{d}{dx}\left(\frac{d}{da} \mathbf r \right) = \frac d {da} \mathbf r$ for all $x \in \Sigma$. But we could get something interesting from $\frac d{db} \mathbf r$. For $a$, we have \begin{align*} \frac{d}{da} \left(\frac d{db} \mathbf r \right) &= \frac{d}{da} \mathtt{(a+b)^*b} \\ &= \frac{d}{da} (\mathtt{(a+b)^*}) \mathtt b + \mathsf c(\mathtt{(a+b)^*}) \frac d{da} \mathtt b \\ &= \frac{d}{da} (\mathtt{a+b}) \mathtt{(a+b)^*} \mathtt b + \varepsilon \emptyset \\ &= \left(\frac d{da} \mathtt a + \frac d{da} \mathtt{b} \right) \mathtt{(a+b)^*} \mathtt b \\ &= \left(\varepsilon + \emptyset \right) \mathtt{(a+b)^*} \mathtt b \\ &= \mathtt{(a+b)^*} \mathtt b \\ \end{align*}

Then for $b$, we will skip some steps we've seen above and we end up with \begin{align*} \frac{d}{db} \left(\frac d{db} \mathbf r \right) &= \frac{d}{db} \mathtt{(a+b)^*b} \\ &= \frac{d}{db} (\mathtt{(a+b)^*}) \mathtt b + \mathsf c(\mathtt{(a+b)^*}) \frac d{db} \mathtt b \\ &= \frac{d}{db} (\mathtt{a+b}) \mathtt{(a+b)^*} \mathtt b + \varepsilon \varepsilon \\ &= \mathtt{(a+b)^*} \mathtt b + \varepsilon \end{align*}

The fact that we can continue to compute derivatives of derivatives suggests that we can define an obvious extension for derivatives to words. Let $w = ax$, where $x \in \Sigma^*$ and $a \in \Sigma^*$. Then $\frac{d}{dw}\left(\mathbf r\right) = \frac{d}{dx}\left(\frac{d}{da}\left(\mathbf r\right)\right)$. This leads to the following very interesting observation.

Let $\mathbf r$ be a regular expression and let $w \in \Sigma^*$. Then $w \in L\left(\mathbf r\right)$ if and only if $\varepsilon \in L\left(\frac{d}{dw}\left(\mathbf r\right)\right)$.

We have $\varepsilon \in L\left(\frac{d}{dw}\left(\mathbf r\right)\right) \iff \varepsilon \in w^{-1} L\left(\mathbf r\right) \iff w \varepsilon \in L\left(\mathbf r\right) \iff w \in L\left(\mathbf r\right).$

Let's see where this idea takes us. We don't yet have any derivatives that match $\varepsilon$, but we still have a derivative we haven't seen before: $\frac d{db} \frac d{db} \mathbf r$, or $\frac d{dbb} \mathbf r$. But since $\frac d{dbb} \mathbf r = \frac d{db} \mathbf r + \varepsilon$, computing the next derivatives is pretty straightforward. We have \begin{align*} \frac{d}{da} \left(\frac d{dbb} \mathbf r \right) &= \frac{d}{da} (\mathtt{(a+b)^*b + \varepsilon}) \\ &= \frac{d}{da} \mathtt{(a+b)^*b + \frac d{da} \varepsilon} \\ &= \mathtt{(a+b)^*b + \emptyset} \\ &= \mathtt{(a+b)^*} \mathtt b \end{align*} \begin{align*} \frac{d}{db} \left(\frac d{dbb} \mathbf r \right) &= \frac{d}{db} (\mathtt{(a+b)^*b + \varepsilon}) \\ &= \frac{d}{db} \mathtt{(a+b)^*b + \frac d{db} \varepsilon} \\ &= \mathtt{(a+b)^*b + \varepsilon + \emptyset} \\ &= \mathtt{(a+b)^*} \mathtt b + \varepsilon \end{align*}

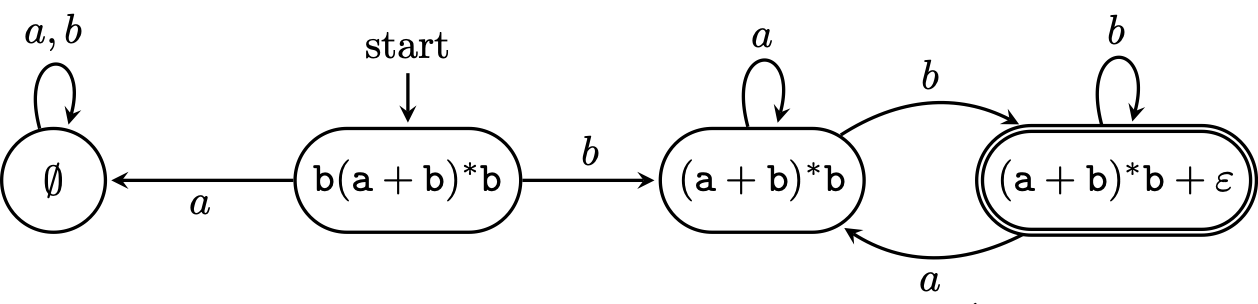

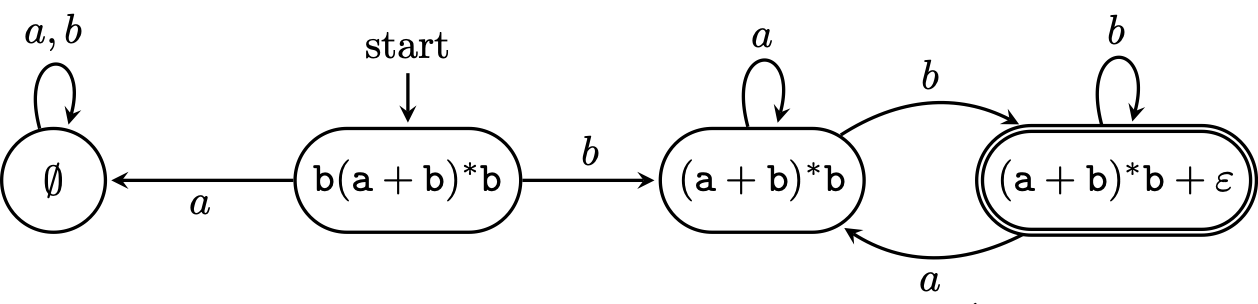

Now, we don't see any new derivatives. This seems like the right outcome, but when we started this process, we didn't really have any guarantees that we could ever stop. That we got here is not at all obvious. But Brzozowski showed that this is guaranteed to happen.

Every regular expression $\mathbf r$ has a finite number of distinct derivatives.

This has a few implications. First of all, to compute all the derivatives of a regular expression $\mathbf r$, we can do what we've done and just keep on computing them until we start getting the same expressions. Obviously, this is not the most efficient way to do this, but we're guaranteed the computation will stop.

But thinking back to the theoretical point of view, recall that these are sets of suffixes of words in our language. And when we consume prefixes of words belonging to these sets, we get different sets, but there are only finitely many of these sets. And finally, we know that those sets that contain $\varepsilon$ correspond to words in the language. This looks suspiciously like the following.

\begin{tikzpicture}[shorten >=1pt,on grid,node distance=3cm,>=stealth,thick,auto]

\node[state,initial above,rounded rectangle] (0) {$\mathtt{b(a+b)^*b}$};

\node[state,rounded rectangle] (1) [left of=0]{$\mathtt{\emptyset}$};

\node[state,rounded rectangle] (2) [right of=0] {$\mathtt{(a+b)^*b}$};

\node[state,accepting,rounded rectangle] (3) [right of=2]{$\mathtt{(a+b)^*b + \varepsilon}$};

\path[->]

(0) edge node {$a$} (1)

(0) edge node {$b$} (2)

(1) edge [loop above] node {$a,b$} (1)

(2) edge [loop above] node {$a$} (2)

(2) edge [bend left] node {$b$} (3)

(3) edge [loop above] node {$b$} (3)

(3) edge [bend left] node {$a$} (2)

;

\end{tikzpicture}

In fact, the derivatives of a regular expression correspond to the Myhill–Nerode equivalence classes for the language of the regular expression. But we also saw that the Myhill–Nerode equivalence classes correspond to the states of the minimal DFA for its language. So this DFA that we got from our derivatives is the minimal DFA.

So we have the final piece of the puzzle: regular expressions do have a connection with Myhill–Nerode after all. And this is even more convincing than our proofs of Kleene's Theorem. From that perspective, it simply looked like DFAs and regular expressions happened to represent the same kinds of objects. But the connection that Myhill–Nerode provides links both to an intrinsic property of the language.

Unlike the Thompson construction we saw before or the position automaton I mentioned briefly, this directly gives us a DFA. However, if we want to use derivatives to compute an NFA, one way to do this was proposed by Antimirov, who, in 1996, defined partial derivatives of a regular expression. This method, like the position automaton that was mentioned earlier, produces an NFA of roughly the same size as the length of the regular expression.

This method lends itself quite nicely to implementation in functional programming languages. In fact, Stuart Kurtz's CMSC 28000 course notes on derivatives pointed me to a paper detailing the use of derivatives in the regular expression implementations of Standard ML and Scheme (which some of you probably know now as Racket).

How I first learned about derivatives was as an undergrad stumbling upon the first version of Matt Might's post about his paper on using derivatives for parsing. It's quite accessible if you have some formal languages and algorithms knowledge. He also has his own implementation of a very simple regular expression matcher that uses derivatives in Scheme, which should be easy enough to follow if you've done some functional programming.