Set Cover

One of the things that we've seen and will continue to see is that there are some surprising connections between problems that seem very different. For instance, we will consider the following problem.

The set cover problem is: Given a universe $U$ of $n$ elements, a collection of subsets $\mathcal X = \{X_1, \dots, X_m\}$ of $U$, and an integer $k \geq 0$, does there exist a collection of at most $k$ subsets $X_i$, $1 \leq i \leq m$, whose union is equal to $U$?

Consider the universe $U = \{1,2,3,4,5,6,7,8,9\}$ and the sets

- $X_1 = \{1,2,3,5\}$

- $X_2 = \{2,8,9\}$

- $X_3 = \{3,4,6\}$

- $X_4 = \{5,6,7,8\}$

- $X_5 = \{4,9\}$

- $X_6 = \{1,3,5\}$

Clearly, the easy answer is that choosing every set is a set cover for $U$, so we really want the smallest set cover for $U$. Such a set cover is $X_1, X_4, X_5$.

This is a problem about sets, so at first glance this doesn't seem like it has anything to do with the graph problems that we've been looking at. But from its name, you can guess that set cover is a covering problem like vertex cover and we remember that graphs (like most things in mathematics) are made of sets, so maybe there is a connection after all.

Vertex Cover $\leq_P$ Set Cover.

We are given an instance of Vertex Cover $(G,k)$ with $G = (V,E)$ and $k \geq 0$. We will show how to solve Vertex Cover by constructing an instance of Set Cover $(U,\mathcal X,k)$.

Let our universe $U$ be the set of edges $E$. Then for each vertex $v \in V$, we define the subset

\[X_v = \{e \in E \mid \exists u \in V, e = (u,v) \}.\]

In other words, $X_v$ is the subset of edges that are incident to $v$.

Now, we want to show that $G$ has a vertex cover of size $k$ if and only if $U$ contains a set cover by sets in $\mathcal X$ of size $k$.

Suppose that $C \subseteq V$ is a vertex cover of size $k$ in $G$. Then we choose $\mathcal Y = \{X_v \mid v \in C\}$ as our set cover. Since $C$ is a vertex cover, every edge in $G$ is adjacent to at least one vertex in $C$. Therefore, every edge is a member of some set in $\mathcal Y$. And by definition, $|\mathcal Y| = k$.

Now, suppose that $\mathcal Y \subseteq S$ is a set cover of size $k$ for $U$. Then we take $C = \{v \mid X_v \in \mathcal Y\}$ and claim that $C$ is a vertex cover. To see this, we note that since $\mathcal Y$ is a set cover, every element of $U$ is in some set in $\mathcal Y$. Therefore, every edge is a member of some set in $\mathcal Y$.

Then, we can solve Vertex Cover by constructing for an instance $(G,k)$ an instance $(U,\mathcal X,k)$ and running an algorithm for Set Cover on $(U,\mathcal X,k)$.

In other words, we've shown that if we can solve this problem on sets, then we can solve a problem on graphs. But what if we wanted to solve Independent Set? It turns out that reduction is transitive.

If $A \leq_P B$ and $B \leq_P C$, then $A \leq_P C$.

Satisfiability

So you might say, okay, it makes sense to reduce Set Cover to Vertex Cover because they're both about covers and you can kind of generalize things to graphs. But what if we considered something that wasn't about covering at all?

Here, we will need to recall some definitions from propositional logic.

A boolean variable is a variable that can be assigned a value from $\{0,1\}$ (or $\{T,F\}$). A literal is a boolean variable or its negation. A clause is a disjunction of literals. We say a boolean formula $\varphi$ is in conjunctive normal form (CNF) if $\varphi$ is a conjunction of clauses.

Consider the formula

\[\varphi = (\neg x_1 \vee x_2 \vee x_3) \wedge (x_1 \vee \neg x_2 \vee x_3) \wedge (\neg x_1 \vee x_2 \vee x_4).\]

This formula is in conjunctive normal form and has

- four variables: $x_1,x_2,x_3,x_4$,

- six literals: $x_1, \neg x_1, x_2, \neg x_2, x_3, x_4$,

- three clauses: $(\neg x_1 \vee x_2 \vee x_3), (x_1 \vee \neg x_2 \vee x_3), (\neg x_1 \vee x_2 \vee x_4)$.

Recall that propositional formulas are logical statements that assert the truth of some statement. Usually this is mathematical in nature. We are interested in determining whether such a statement is ever true. In other words, is there a way for us to assign the variables in such a way that the formula becomes true? This is called the satisfiability problem.

The satisfiability (SAT) problem is: Given a propositional formula $\varphi$, does it have a satisfying truth assignment? The 3-SAT problem is: Given a propositional formula $\varphi$ in conjunctive normal form, where each clause contains exactly three literals, does it have a satisfying truth assignment?

Satisfiability is a basic problem in mathematical logic and has obvious applications in things like circuit design. However, as mentioned before, there are a number of mathematical statements that one can express in propositional logic, so being able to solve this would mean being able to determine the satisfiability of any statement that can be expressed as a propositional statement.

We will show something that is maybe a bit surprising.

3-SAT $\leq_P$ Independent Set.

Given an instance $\varphi$ of 3-SAT, where $\varphi$ has $k$ clauses, we will construct an instance $(G,k)$ of Independent set that has an independent set of size $k$ if and only if $\varphi$ is satisfiable.

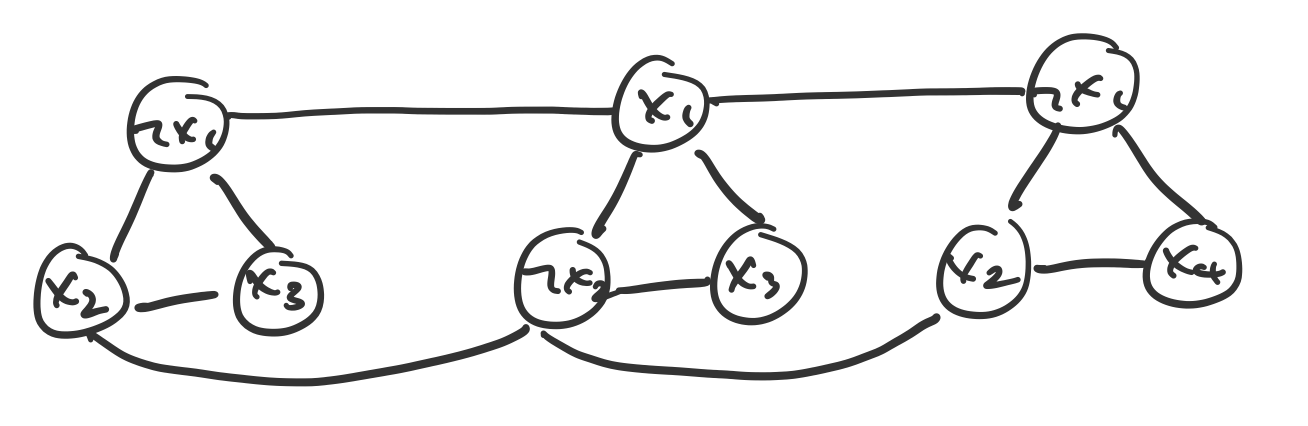

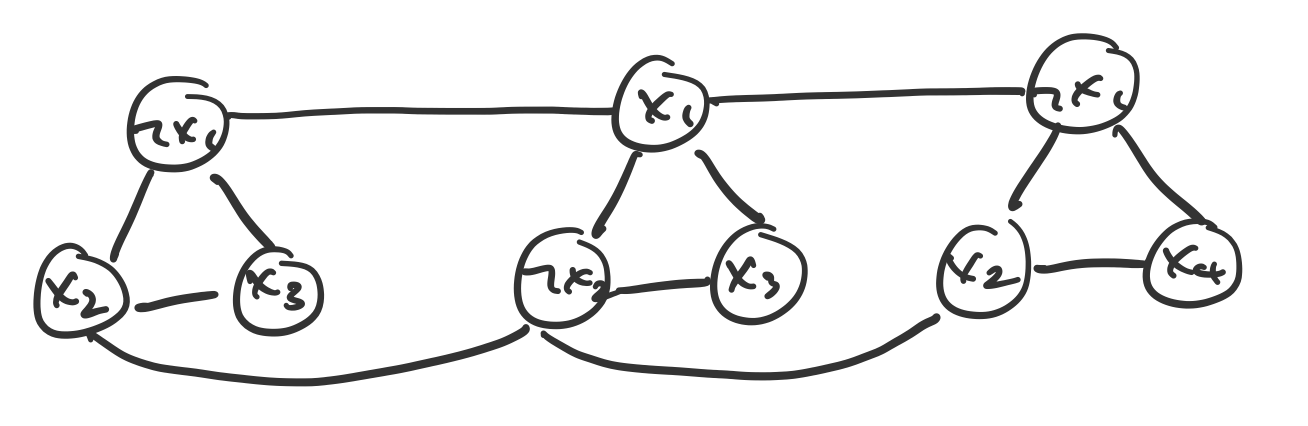

Our construction is as follows. For each clause $C = x_i \vee x_j \vee x_k$ in $\varphi$, we construct a triangle. Then, we add an edge between each literal and its negations in the other clauses.

Consider the following graph, constructed by using the formula from Example 18.6. Note that each triangle corresponds to a clause and that the literals $x_1$ and $x_2$ have edges to their negations, $\neg x_1$ and $\neg x_2$.

We will show that $\varphi$ is satisfiable if and only if $G$ contains an independent set of size $k$, where $k$ is the number of clauses in $\varphi$.

First, suppose $\varphi$ is satisfiable and consider a satisfying assignment. This means that in every clause, at least one of the literals is true. Then we choose one of these literals from each clause and these form an independent set $S$.

To see that they do, it is clear that only one vertex can be chosen from each triangle. Then all that remains is that there are no edges joining two vertices in different triangles. But the only way this can happen is between two literals $x_i$ and $\neg x_i$, which can't be the case in a satisfying assignment. therefore, $S$ is an independent set.

Next, suppose that $G$ contains an independent set $S$ of size $k$. Since $S$ is independent, it must contain exactly one vertex from each triangle. We set the literals corresponding to these vertices to True, as long as they are consistent. Then this means every clause evaluates to true, so $\varphi$ is satisfied.

With that we have the following relationships among the problems we've discussed so far:

\[\mathrm{3SAT} \leq_P \mathrm{Independent\ Set} \leq_P \mathrm{Vertex\ Cover} \leq_P \mathrm{Set\ Cover}.\]

Classification of problems

So at this point, we have the tools to show that there are problems that are about as hard as each other, but we still don't know whether they have efficient algorithms or whether we should expect them to.

Ultimately, what we want to do is classify those problems that we know have efficient algorithms. But first, what is a problem? We have a vague notion of what this is, but we would like to formalize this notion. It might be surprising that we would want to formalize what a problem is mathematically or that we can even do this, but this is really what computer science is about: the mathematics of how to solve problems.

Recall that we mentioned that a decision problem is one that, when given an input, answers a Yes or no. This sounds suspiciously like a function, so we might say that a problem is some function that maps inputs from $\mathbb N$ to $\{Y,N\}$. But as we've seen above, our domains can get much more complicated than $\mathbb N$.

Viewing such problems as functions is a good start, but we want something more general to work with. In the above examples, there's no unification in terms of the number of inputs or the domains of the functions, which makes it a bit difficult to talk about the two questions in the same way.

One of the first insights you will have internalized already is that we can represent pretty much anything we would like with the proper encoding. Generally speaking, this means that we can treat any input as a string. We can even treat "multiple" inputs, as in the case of our graph problem, as a single string, by encoding the delimiters appropriately as well. In this way, we can now define decision problems a functions from strings to Yes or No.

Let $\Sigma$ be an alphabet. A decision problem is a Boolean function $P:\Sigma^* \to \{0,1\}$ that maps input strings over $\Sigma$ to a single bit.

Another way to look at a Boolean function is as a set $S$, where a string $x$ is in $S$ if $P(x) = 1$ and $x \not \in S$ if $P(x) = 0$.

Let $\Sigma$ be an alphabet. A decision problem is a set $P \subseteq \Sigma^*$. A string $x$ is a Yes instance of $P$ if and only if $x \in P$.

Then we can treat an algorithm as a function that tells us whether an input is a Yes or No instance and we can associate it with a running time.

An algorithm $A$ solves a problem $P$ if

\[A(x) = \begin{cases} \mathrm{Yes} & \text{if $x \in P$,} \\ \mathrm{No} & \text{if $x \not \in P$.} \end{cases}\]

We say $A$ runs in polynomial time if for every input string $x$, $A(x)$ terminates in $\leq p(|x|)$ steps, where $p$ is a polynomial.

This allows us to define a class of problems based on the time complexity of the algorithms that solve them.

The class $\mathbf P$ is the class of decision problems for which there exists a polynomial time algorithm.

What problems are in $\mathbf P$? Almost every problem we've seen so far is a problem in $\mathbf P$, because we have polynomial time algorithms that solve them (with a few exceptions). As a reminder, the following problems that we've seen that are in $\mathbf P$ are

- Stable matching

- Interval scheduling

- Single-source shortest path

- Minimum spanning tree

- Sorting

- Closest pair of points in a plane

- Integer mulitiplication

- Matrix multiplication

- Weighted interval scheduling

- Edit distance

- Single-destination shortest paths

- RNA secondary structure prediction

- Maximum flow

- Minimum cut

- Bipartite matching

However, we've also seen a few problems where we don't know whether there are polynomial time algorithms.

- 0-1 knapsack

- Independent set

- Vertex cover

- Set cover

- Satisfiability

- 3-SAT

A big problem with this is that we don't know whether there is or isn't an efficient algorithm for these problems. As mentioned before, a definitive answer one way or the other would be helpful, but we don't have one. But maybe we can try something less ambitious first. What if, instead of trying to solve the problem, we could check whether a proposed solution is correct?